Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

The lines between AI competition, intellectual property, and national security are collapsing fast. On Thursday, Anthropic dropped a bombshell: three Chinese AI labs had been systematically extracting Claude’s capabilities through 16 million fraudulent queries – and the company named names. That story sits at the centre of today’s briefing, alongside a rare transparency move from Anthropic, a blowout earnings report from Micron, and a self-hosted intelligence tool that’s all over GitHub right now.

AI geopolitics isn’t a background story anymore. It’s the main event.

Anthropic published a detailed post on Thursday revealing it had identified “industrial-scale campaigns” by three Chinese AI laboratories – DeepSeek, Moonshot AI, and MiniMax – to steal Claude’s capabilities through a technique called model distillation.

The companies generated over 16 million exchanges with Claude through approximately 24,000 fraudulent accounts, in breach of Anthropic’s terms of service and regional access restrictions.

Distillation itself is not illegal – frontier labs regularly use it to shrink their own models into smaller, cheaper versions. The problem here is using a competitor’s model to bootstrap capabilities that would otherwise take years and billions of dollars to develop independently. Anthropic’s bigger concern is what happens when those distilled models end up in military or surveillance systems without the safety guardrails the original was built with.

“Models built through illicit distillation are unlikely to retain those safeguards,” Anthropic wrote, citing bioweapons and cyber operations as specific risks. The company is calling for coordinated action from industry players, policymakers, and the global AI community.

CNBC reported that OpenAI has framed similar distillation behaviour as a national security threat – not just an IP dispute. That framing matters. It moves the dispute out of commercial law and into national defence, where objections are much harder to argue against.

On the same day, Anthropic released Claude’s “new constitution” – a detailed, publicly available document that directly shapes Claude’s values and behaviour during training. It’s published under a Creative Commons CC0 licence, meaning anyone can use it freely.

The constitution covers how Claude balances helpfulness with safety, handles honesty and compassion in tension, and approaches situations where guidelines might conflict. Anthropic describes it as “written primarily for Claude” – a document intended to give the model the context it needs to act well, not just a list of rules for humans to read.

Publishing this is meaningful from a transparency perspective: it lets outsiders tell which Claude behaviours are intended and which are bugs, and it holds Anthropic accountable to its own stated principles. Whether that accountability actually lands is a different question. But it’s more than most labs do.

Jack Conte, founder and CEO of Patreon, gave a pointed talk at SXSW this week. He’s not anti-AI – he was clear about that. But he’s done pretending the fair use argument holds up.

His argument: if training on creators’ work is legally fair use, why are AI companies cutting multi-million-dollar deals with Disney, Condé Nast, Vox, and Warner Music? If they can use the content freely, why pay the big rights holders at all?

The answer, Conte suggested, is that those companies have lawyers and leverage. Individual illustrators, musicians, and writers don’t – and that asymmetry is what AI companies are exploiting. This is becoming a mainstream policy flashpoint, not a niche creator complaint.

Bloomberg ran an analysis this week asking whether the current AI investment cycle is sustainable. The core tension: infrastructure spending is massive and accelerating, but monetisation at the application layer is still catching up. The classic setup for a bubble is capital flowing faster than revenue – and there are signs of that. But demand for compute and memory is real and quantifiable, not speculative. Micron’s earnings, just below, are the clearest data point on the “this is real” side of that argument.

Micron posted fiscal Q2 2026 results that surprised almost everyone. Revenue hit .86 billion against a consensus estimate of .07 billion. Earnings per share came in at .20 adjusted versus .31 expected. A year ago, the same quarter brought in .05 billion.

For next quarter, Micron guided to approximately .5 billion in revenue – up from .3 billion a year ago, implying growth of over 200%. CEO Sanjay Mehrotra put it directly: “The step-up in our results and outlook are the outcome of an increase in memory demand driven by AI, structural supply constraints and Micron’s strong execution across the board.”

The mechanism is simple. Every new generation of Nvidia GPU requires more high-bandwidth memory than the last. Micron makes that memory. If you want one metric to watch for whether the AI build-out is still happening, Micron’s quarterly results are it.

While the US debate focuses on IP, national security, and lab politics, China is doing something different: getting AI into the hands of ordinary people at scale. CNBC reported on how Baidu and Tencent are driving mainstream adoption across demographics that Western tech companies haven’t reached. If Chinese consumers embed AI into daily life faster than Western counterparts, it changes who benefits from widespread AI adoption long-term.

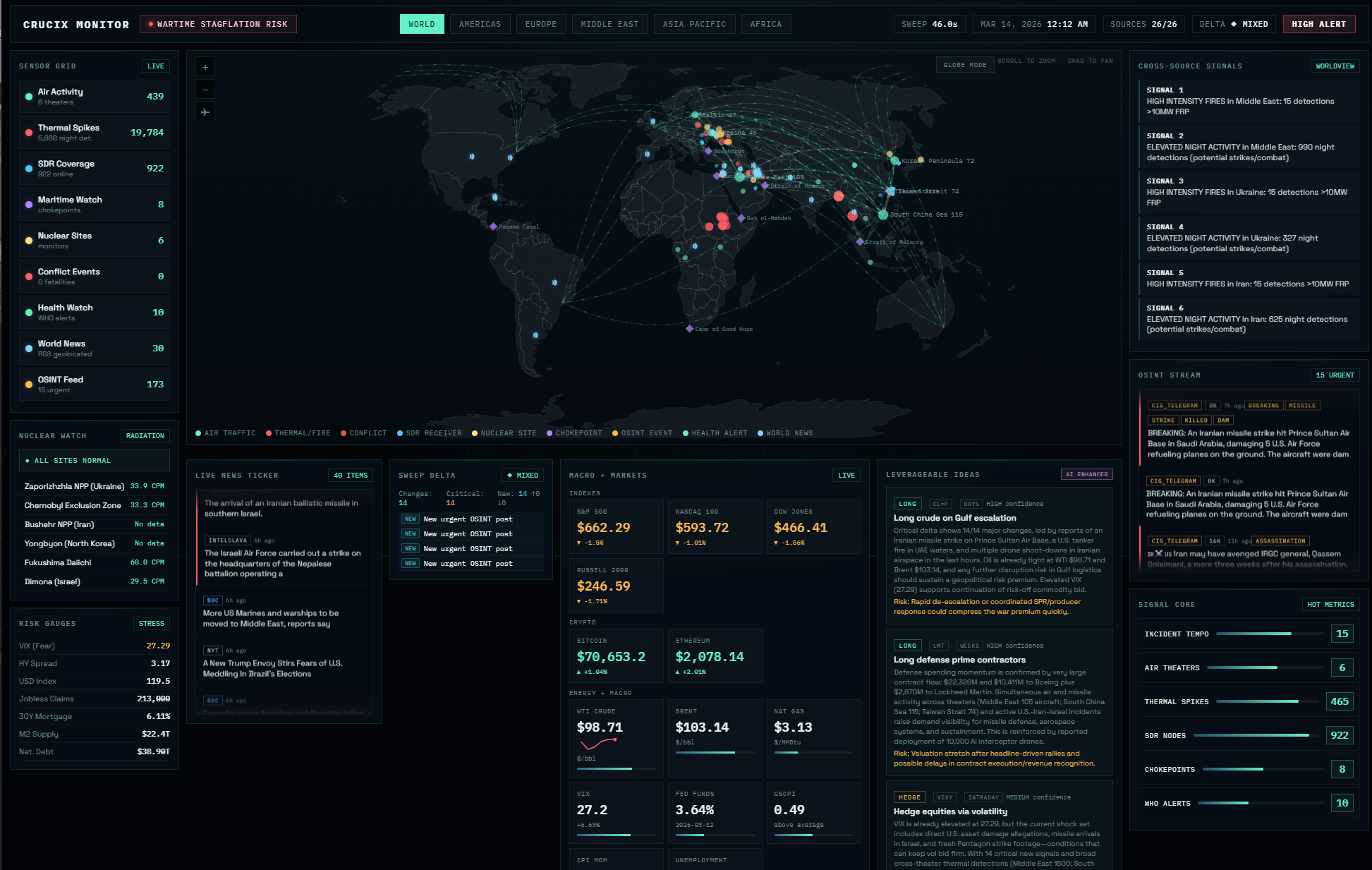

For the developers and privacy-minded folks in the room – Crucix is worth a look. It’s a self-hosted personal intelligence agent that pulls 27 open-source data feeds – satellite fire detection, flight tracking, radiation monitoring, conflict data, market prices, sanctions lists, and social sentiment – and renders everything in a Jarvis-style dashboard updated every 15 minutes.

Hook it up to an LLM and it becomes a two-way assistant: push alerts to Telegram or Discord when something changes, and query it with /brief for on-demand summaries. Zero cloud. One Docker command to spin up. The repo has been trending all week.

The Anthropic distillation story and the Patreon fair use argument are really the same story told from two different angles: who controls what AI gets trained on, and what happens when that control breaks down. At the frontier lab level, it’s espionage and national security. At the creator level, it’s unpaid labour and power asymmetry.

Micron’s numbers mean the infrastructure build-out isn’t slowing. Bloomberg’s bubble question is fair, but the demand signal is very real. And China’s consumer AI push suggests the downstream effects of all this spending will land unevenly across markets. This is a week where the geopolitical, commercial, and ethical threads of AI converge in the same news cycle.

Model distillation is a training technique where a smaller model is trained on the outputs of a larger one. It’s widely used legitimately by AI labs to create compact versions of their own models. The controversy arises when a competitor uses it to extract capabilities from another company’s model without permission, potentially bypassing safety guardrails in the process.
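To make the mechanics concrete, here is a minimal sketch of the idea in Python. It is a toy under stated assumptions, not how any lab actually does it: the "teacher" is a fixed one-line function standing in for a large model, the "student" is a two-parameter logistic model, and every name (`teacher`, `distill`, `num_queries`) is hypothetical. The point is only that the student never sees ground-truth labels; it learns purely from the teacher's answers to queries.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def teacher(x):
    """Toy stand-in for the large model: returns P(label = 1 | x)."""
    return sigmoid(3.0 * x - 1.0)


def distill(num_queries=500, epochs=1000, lr=1.0, seed=0):
    """Fit a small student to the teacher's outputs only."""
    rng = random.Random(seed)

    # Step 1: query the teacher and record its soft (probabilistic) answers.
    xs = [rng.uniform(-2.0, 2.0) for _ in range(num_queries)]
    soft_labels = [teacher(x) for x in xs]

    # Step 2: train the student, q(x) = sigmoid(w * x + b), by full-batch
    # gradient descent on cross-entropy against those soft labels.
    w, b = 0.0, 0.0
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, p in zip(xs, soft_labels):
            q = sigmoid(w * x + b)
            grad_w += (q - p) * x  # d(loss)/dw for one example
            grad_b += q - p        # d(loss)/db for one example
        w -= lr * grad_w / num_queries
        b -= lr * grad_b / num_queries
    return w, b


if __name__ == "__main__":
    w, b = distill()
    print(f"student parameters: w={w:.3f}, b={b:.3f}")
```

In this toy the student can represent the teacher exactly, so with enough queries its parameters drift towards the teacher's. Real distillation involves far larger models and text outputs, but the shape is the same: collect the bigger model's responses at volume, then train on them.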

Anthropic named three Chinese AI labs – DeepSeek, Moonshot AI, and MiniMax – as responsible for over 16 million exchanges, made through roughly 24,000 fraudulent accounts, designed to extract Claude’s capabilities. The concern isn’t just IP theft; it’s that distilled models may lack the safety systems of the original, creating risks if deployed in military or surveillance contexts.

Micron is one of a small number of suppliers of the high-bandwidth memory used in Nvidia GPUs. As each new GPU generation requires more memory, Micron benefits directly from accelerating AI infrastructure investment. Its Q2 2026 results – revenue nearly tripling year-on-year – are a strong signal that AI hardware demand remains robust.

Crucix is an open-source, self-hosted intelligence dashboard that aggregates 27 real-world data feeds including satellite fire detection, flight tracking, radiation monitoring, and conflict data. It runs locally via Docker and can be connected to an LLM for natural language queries and automated alerts.