Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

Lately, I have been thinking more seriously about where real business opportunities in AI might emerge. As a founder, I feel I need to be more sensitive to shifts in the market, especially now that the rise of AI agents is starting to weaken what used to be a strong moat. When the tools change, the strategy must change with them.

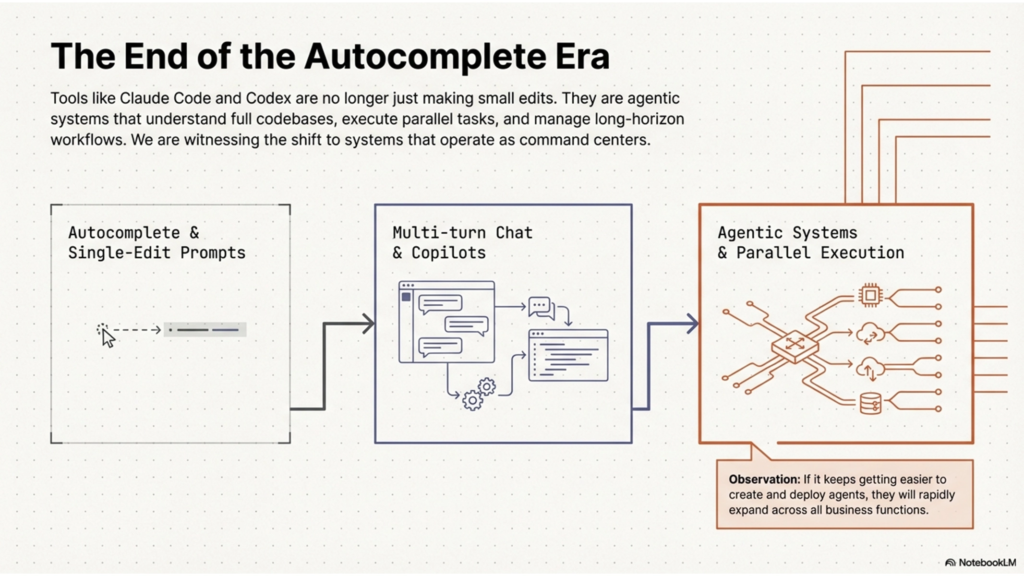

One reason this caught my attention is the rise of coding agents. Tools like Claude Code and OpenAI’s Codex are no longer just helping with autocomplete or small edits. They are being positioned as agentic systems that can understand a codebase, run tasks, and manage work across longer workflows. OpenAI even describes the Codex app as a “command center for agents,” designed for multiple agents and parallel work. That feels like a meaningful shift.

My founder takeaway is simple: if it keeps getting easier to create and deploy agents, then more agents will start appearing across more business functions. There will need to be an entire layer around them that helps them connect to tools, work reliably, and stay controllable. That is the category that started to stand out to me: AI agent infrastructure.

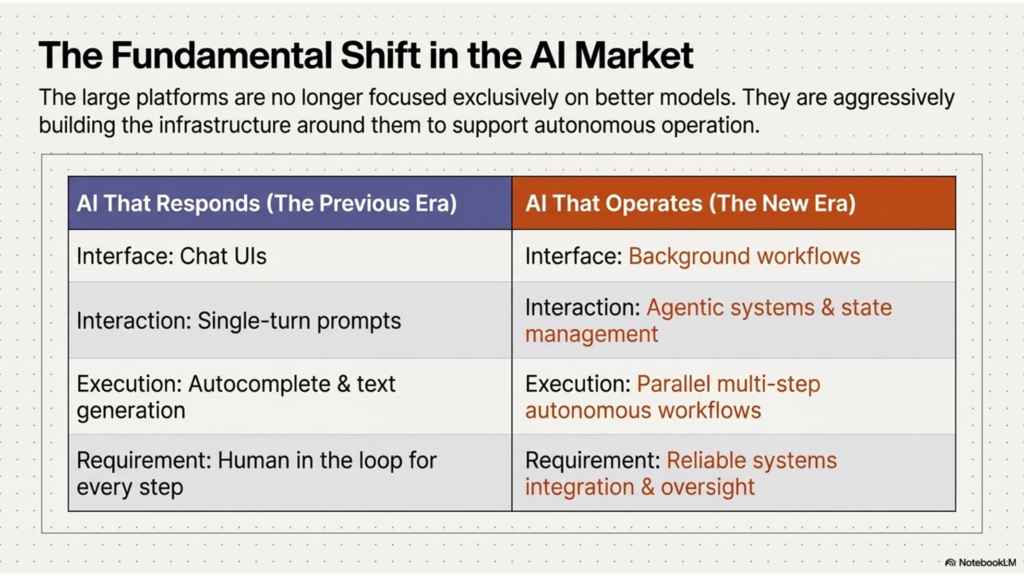

What makes this feel real is that the large platforms are no longer focused only on better models. They are increasingly building the systems around those models. OpenAI launched tools specifically for building agents, including built-in tools, orchestration via the Agents SDK, and observability for tracing workflows. The Model Context Protocol (MCP) is now framed as an open standard for connecting AI applications to external systems. Google launched the Agent2Agent (A2A) protocol so that agents can communicate and coordinate across systems. Together, these suggest the industry is shifting from “AI that responds” toward “AI that operates.”

To me, that is the interesting part. Standards, orchestration, and interoperability usually become important only when a category starts moving toward real deployment. Anthropic’s decision to donate MCP to the Linux Foundation’s Agentic AI Foundation makes that trend even harder to ignore.

The simplest definition I would use is this:

AI agent infrastructure is the technical layer that helps agents connect to the real world, execute tasks across systems, and remain reliable and controllable in production.

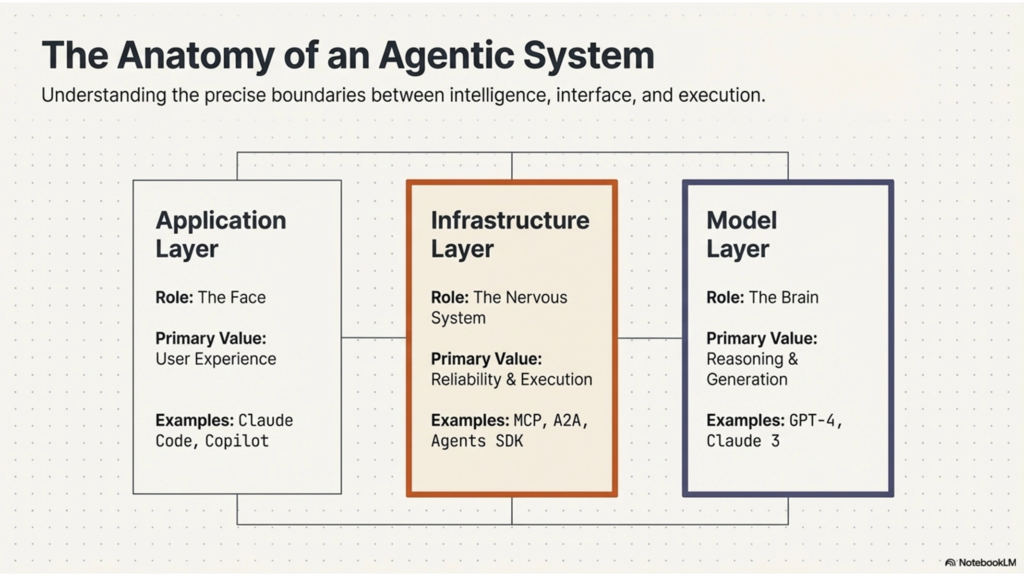

It is not the model itself, and it is not just the user-facing app. It is the layer in between. MCP describes itself as a standard for connecting AI applications to data sources, tools, and workflows. OpenAI’s agent stack similarly focuses on tools, orchestration, and observability rather than prompt-only interactions.
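To make "connecting AI applications to tools" a bit more concrete: as far as I understand the MCP specification, messages use JSON-RPC 2.0, a client discovers a server's tools with a `tools/list` request, and it invokes one with `tools/call`, passing the tool `name` and its `arguments`. The sketch below only builds those request messages with the Python standard library. It is not the official MCP SDK, it skips connection setup and transport entirely, and the `search_tickets` tool is hypothetical. Field names should be checked against the current spec before relying on them.

```python
import json
from itertools import count
from typing import Optional

# Monotonic request ids: JSON-RPC 2.0 matches each response to its request by id.
_ids = count(1)


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request, the envelope MCP messages are built on."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return json.dumps(msg)


def list_tools_request() -> str:
    # Ask a server which tools it exposes.
    return jsonrpc_request("tools/list")


def call_tool_request(name: str, arguments: dict) -> str:
    # Invoke one tool by name with structured arguments.
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})


if __name__ == "__main__":
    print(list_tools_request())
    # "search_tickets" is a hypothetical tool name used only for illustration.
    print(call_tool_request("search_tickets", {"query": "refund", "limit": 5}))
```

The useful point for a founder is the shape: once every tool speaks the same request format, an agent can discover and call tools it was never hard-coded against.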

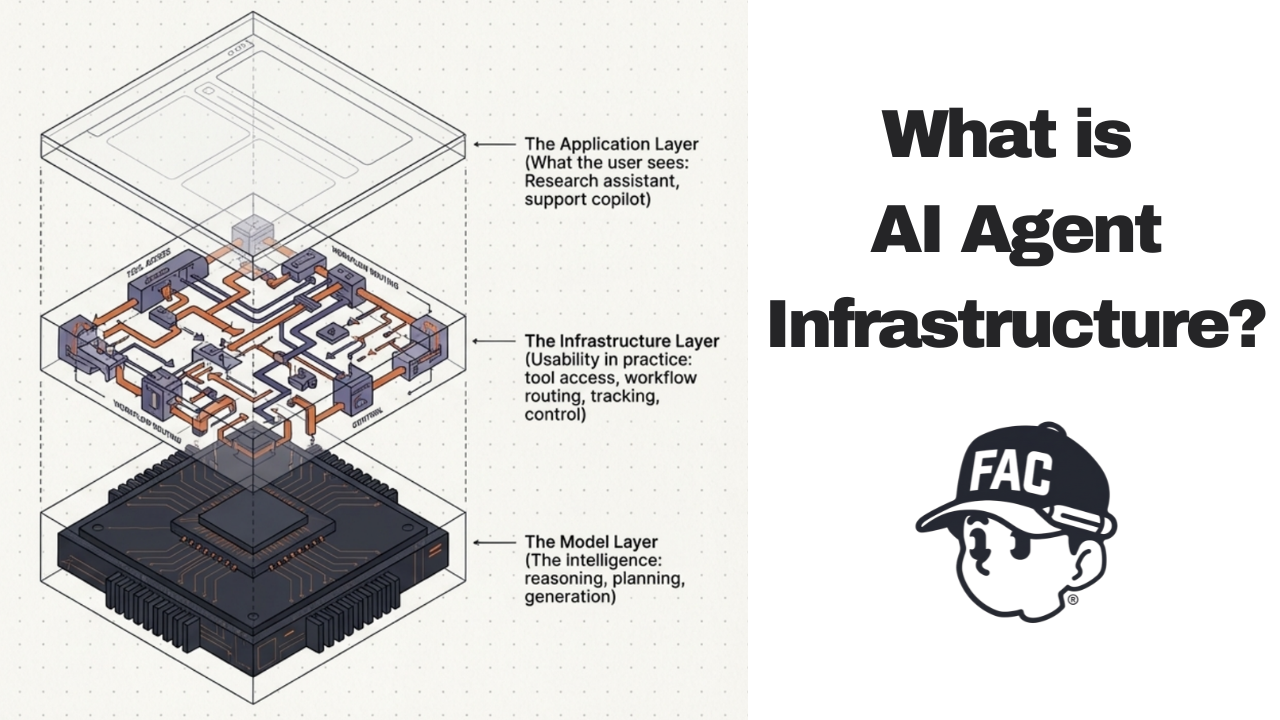

The easiest way to understand it is by separating the system into layers.

- The model layer is the intelligence. That is where reasoning, planning, and generation happen.

- The application layer is what the user sees. That could be a research assistant, support copilot, or coding product.

- The infrastructure layer is what makes the agent usable in practice. It gives the agent access to tools, routes multi-step workflows, tracks what happened, and applies control around what the agent can do. If the model is the brain, infrastructure is closer to the operating layer that makes the system function in the real world.
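A toy sketch can make that separation easier to see. It assumes a hypothetical `plan_next_step` function standing in for the model, a hypothetical `AgentRuntime` class playing the infrastructure role, and a hypothetical support copilot as the application. None of these names come from a real framework. The point is only where each responsibility lives: the model picks an action, the runtime owns tool access, permissions, and a trace, and the application just wires in its business tools.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


# --- Model layer: hypothetical stand-in for an LLM -----------------------------
def plan_next_step(user_request: str) -> Tuple[str, dict]:
    """Pretend model: decides which tool to call and with what arguments."""
    if "order" in user_request.lower():
        return "lookup_order", {"order_id": user_request.split()[-1]}
    return "answer_directly", {"text": "I can only help with orders."}


# --- Infrastructure layer: tools, permissions, and a trace ---------------------
@dataclass
class AgentRuntime:
    tools: Dict[str, Callable[..., str]] = field(default_factory=dict)
    allowed: set = field(default_factory=set)        # control: tools the agent may run
    trace: List[str] = field(default_factory=list)   # tracking: what happened, in order

    def register(self, name: str, fn: Callable[..., str], allow: bool = True) -> None:
        self.tools[name] = fn
        if allow:
            self.allowed.add(name)

    def run(self, user_request: str) -> str:
        tool, args = plan_next_step(user_request)    # ask the model layer
        self.trace.append(f"model chose {tool} with {args}")
        if tool not in self.allowed:
            self.trace.append(f"blocked {tool}")
            return "Action not permitted."
        result = self.tools[tool](**args)
        self.trace.append(f"{tool} returned {result!r}")
        return result


# --- Application layer: a hypothetical support copilot -------------------------
def build_support_copilot() -> AgentRuntime:
    runtime = AgentRuntime()
    runtime.register("lookup_order", lambda order_id: f"Order {order_id} has shipped.")
    runtime.register("answer_directly", lambda text: text)
    return runtime


if __name__ == "__main__":
    copilot = build_support_copilot()
    print(copilot.run("Where is my order 1042"))
    print("\n".join(copilot.trace))
```

Here `build_support_copilot().run("Where is my order 1042")` returns "Order 1042 has shipped." and leaves two entries in `trace`. Swapping the stub for a real model would change only the model layer; the runtime and the application would stay the same, which is the practical argument for treating infrastructure as its own layer.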

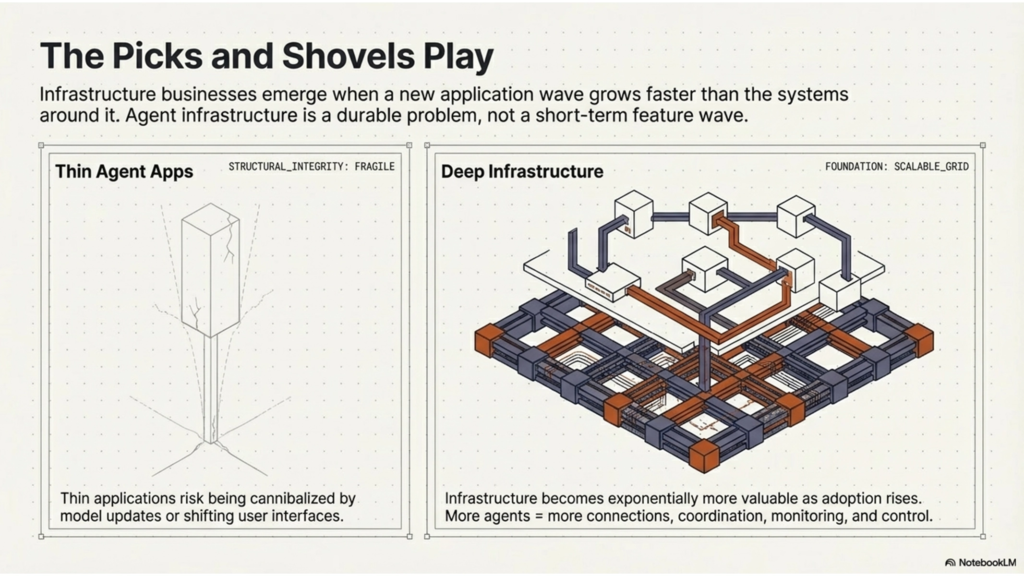

What makes this interesting from a startup perspective is that infrastructure businesses often emerge when a new application wave grows faster than the systems around it.

If more teams build agents, they will need more than model access. They will need ways to connect agents to business tools, coordinate multi-step work, inspect failures, apply permissions, and let different agents or systems communicate with one another. In that sense, agent infrastructure feels like a picks-and-shovels layer for a market that may expand quickly. That is my interpretation of the trend, not a guaranteed market outcome, but it is where my attention is going.

From the way I understand the space today, agent infrastructure can be broken into five practical buckets.

- Connectivity: This is the layer that helps agents access tools, APIs, files, databases, and business systems. MCP is the clearest example because it is built around standardizing those connections.

- Orchestration: Agents often need to complete more than one step. They may need to hand off work, route tasks, or manage state across a workflow. OpenAI’s Agents SDK is specifically described as a way to orchestrate single-agent and multi-agent workflows.

- Observability: Once an agent runs in production, teams need to know what happened. Which tool did it call? Where did it fail? Why did the output go wrong? OpenAI explicitly highlights integrated observability tools for tracing and inspecting agent workflow execution.

- Interoperability: If different agents and different enterprise systems need to work together, they need a common protocol. That is where A2A becomes relevant. Google launched it specifically so agents can communicate securely and coordinate actions across systems.

- Governance and control: The more useful agents become, the more companies will care about permissions, approvals, security, and oversight. This may end up being one of the most important parts of the stack because real adoption depends on trust just as much as capability. OpenAI’s current agent platform direction reflects that by emphasizing visibility and workflow control, not just raw model performance.
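To see how the five buckets can fit together, here is a minimal sketch in plain Python. Everything in it is hypothetical: `Infra`, `Message`, `TraceEvent`, the two agents, and the tools are illustrative names, and `Message` is a made-up envelope, not the A2A wire format. Connectivity is a tool registry, orchestration is a loop that follows handoffs between agents under a step budget, observability is one trace event per tool call, interoperability is the shared message type, and governance is an approval check on sensitive tools that fails closed when no approver is configured.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional


@dataclass
class Message:
    """Interoperability: one envelope every agent understands.
    Hypothetical format for illustration, not the A2A wire schema."""
    sender: str
    recipient: str
    task: str
    payload: Dict[str, Any]


@dataclass
class TraceEvent:
    """Observability: one record per tool call."""
    agent: str
    action: str
    ok: bool
    duration_ms: float


@dataclass
class Infra:
    # Connectivity: tools reachable by name, each taking a dict of arguments.
    tools: Dict[str, Callable[[Dict[str, Any]], Any]] = field(default_factory=dict)
    # Governance: tools that need sign-off, and who gives it (None means deny).
    needs_approval: set = field(default_factory=set)
    approver: Optional[Callable[[str, Dict[str, Any]], bool]] = None
    # Observability: every tool call lands here, success or failure.
    trace: List[TraceEvent] = field(default_factory=list)

    def call_tool(self, agent: str, tool: str, args: Dict[str, Any]) -> Any:
        start = time.perf_counter()
        ok = True
        try:
            if tool in self.needs_approval:
                if self.approver is None or not self.approver(tool, args):
                    raise PermissionError(f"{tool} requires approval")
            return self.tools[tool](args)
        except Exception:
            ok = False
            raise
        finally:
            elapsed_ms = (time.perf_counter() - start) * 1000
            self.trace.append(TraceEvent(agent, tool, ok, elapsed_ms))


# An agent reads a message and may hand off a new one (or None when done).
Agent = Callable[[Message, Infra], Optional[Message]]


def triage_agent(msg: Message, infra: Infra) -> Optional[Message]:
    order = infra.call_tool("triage", "get_order", {"order_id": msg.payload["order_id"]})
    if order["status"] == "damaged":
        # Orchestration: hand the case to a specialist agent.
        return Message("triage", "refunds", "issue_refund", {"order_id": order["id"]})
    return None


def refund_agent(msg: Message, infra: Infra) -> Optional[Message]:
    infra.call_tool("refunds", "issue_refund", msg.payload)
    return None


def orchestrate(first: Message, agents: Dict[str, Agent], infra: Infra,
                max_steps: int = 5) -> List[str]:
    """Follow handoffs until no message remains or the step budget runs out."""
    path: List[str] = []
    msg: Optional[Message] = first
    for _ in range(max_steps):
        if msg is None:
            break
        path.append(msg.recipient)
        msg = agents[msg.recipient](msg, infra)
    return path


if __name__ == "__main__":
    infra = Infra(
        tools={
            "get_order": lambda a: {"id": a["order_id"], "status": "damaged"},
            "issue_refund": lambda a: f"refunded {a['order_id']}",
        },
        needs_approval={"issue_refund"},
        approver=lambda tool, args: True,  # stand-in for a human sign-off
    )
    agents = {"triage": triage_agent, "refunds": refund_agent}
    first = Message("user", "triage", "check_order", {"order_id": "A7"})
    print(orchestrate(first, agents, infra))
    for event in infra.trace:
        print(event.agent, event.action, event.ok)
```

With the approver returning `True`, the run visits `triage` and then `refunds`. With no approver configured, the refund call raises `PermissionError` and the trace records it as a failed step. Failing closed is a deliberate choice in this sketch: an agent that cannot get approval should stop rather than guess.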

I am also fully aware that this is not an untouched category. There are already startups and platforms moving into different parts of this space. AgentMail, for example, is building email infrastructure for AI agents and describes itself as an email inbox API for agents rather than humans. At the same time, the broader ecosystem around MCP, agent gateways, orchestration, and observability is starting to fill up as more teams try to make agents usable in production. This is not necessarily a reason to avoid the space. If anything, it is a sign that the category is becoming real. The real question is not whether other players exist, but which part of the infrastructure layer is still painful enough, narrow enough, and valuable enough to build around.

What attracts me here is not simply that agents are popular. It is that this looks like the kind of layer that becomes more valuable as adoption rises.

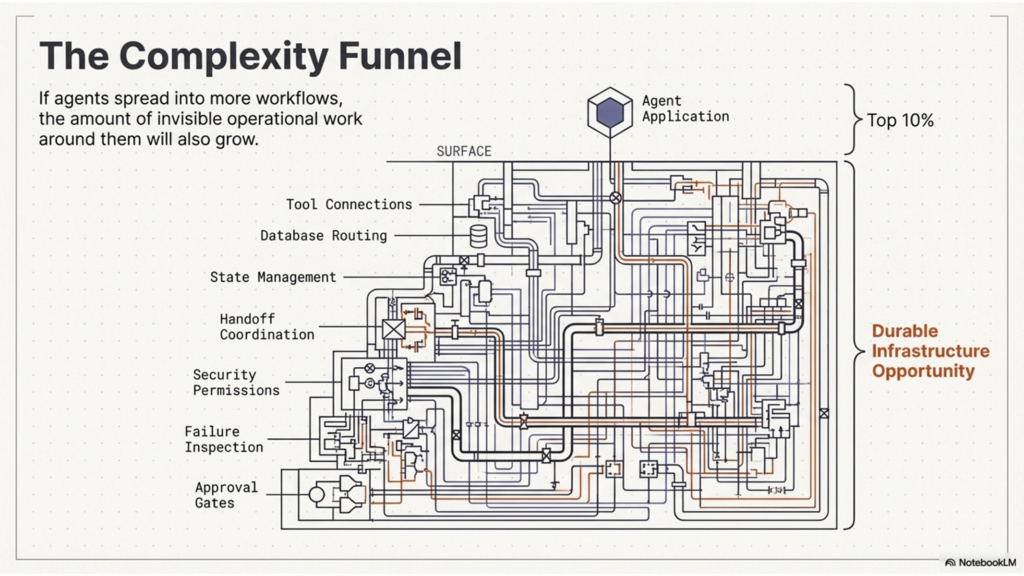

If agents spread into more workflows, the amount of invisible operational work around them will also grow. More agents means more connections, more coordination, more monitoring, and more control needs. That is why infrastructure stands out to me more than many thin agent applications. It feels closer to a durable problem than a short-term feature wave. This is still a founder hypothesis, but it is the reason the category feels worth studying seriously.

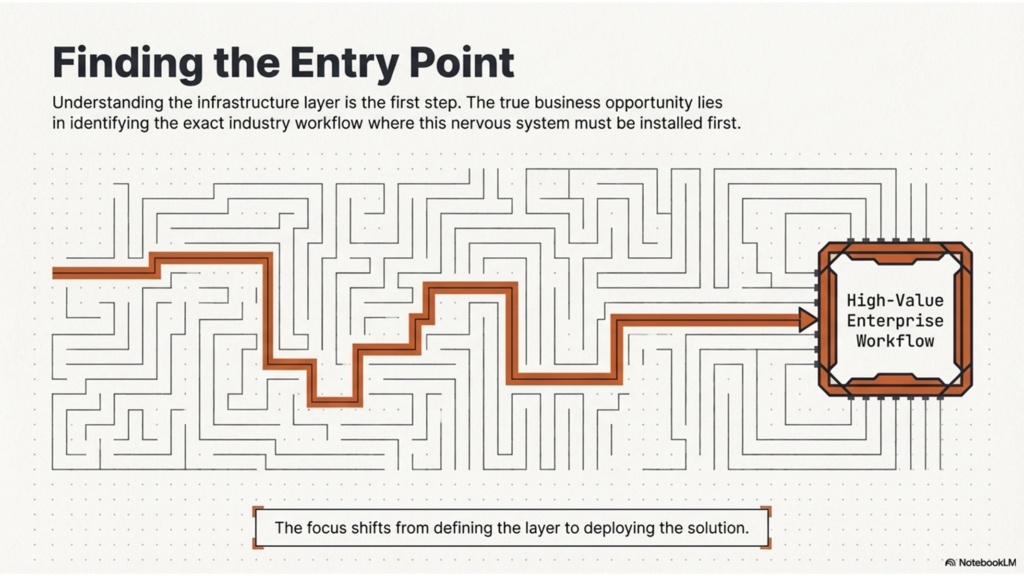

This article is really my first step: understanding what agent infrastructure is and why it may matter.

The next step is more practical. I want to look at which industry or workflow is the right place to apply agent infrastructure. Understanding the layer is one thing; finding the right entry point for a real business is something else entirely.

That is what I want to explore in my next article, so please stay tuned.
