Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

An AI coding assistant is software that uses a large language model to suggest, generate, review, or refactor code on a developer’s behalf. In 2026 these tools are no longer a curiosity. They sit inside editors, terminals, and pull request pipelines across most professional teams in the UK, and the data on how much they change a working day has finally caught up with the hype.

This guide pulls together what the evidence actually says, which tools matter, and how British organisations from HMRC to Monzo are putting them to work.

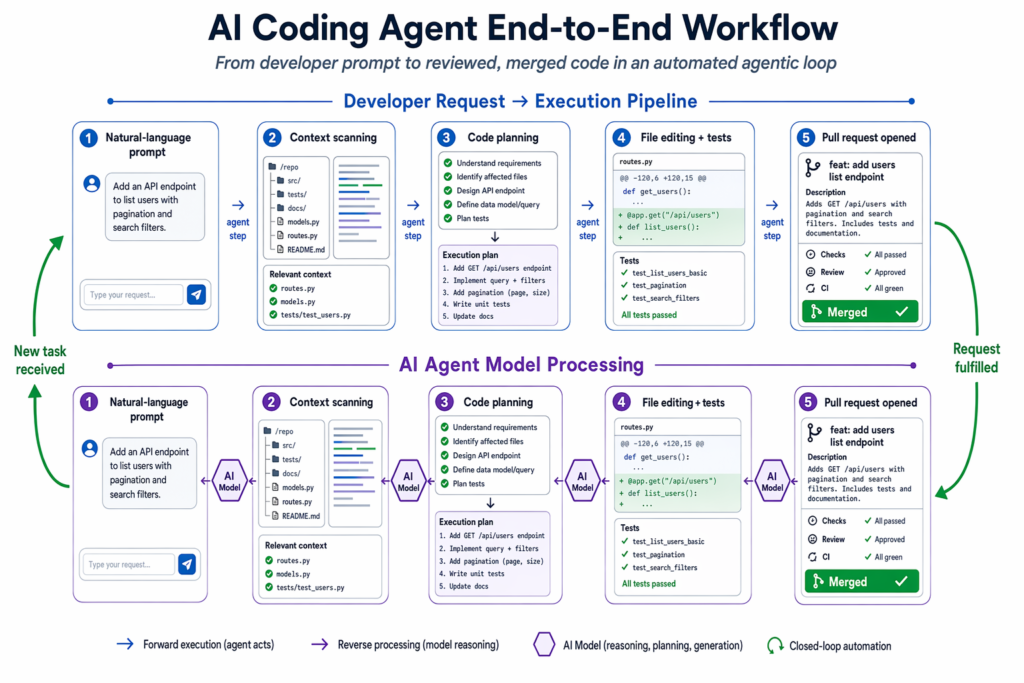

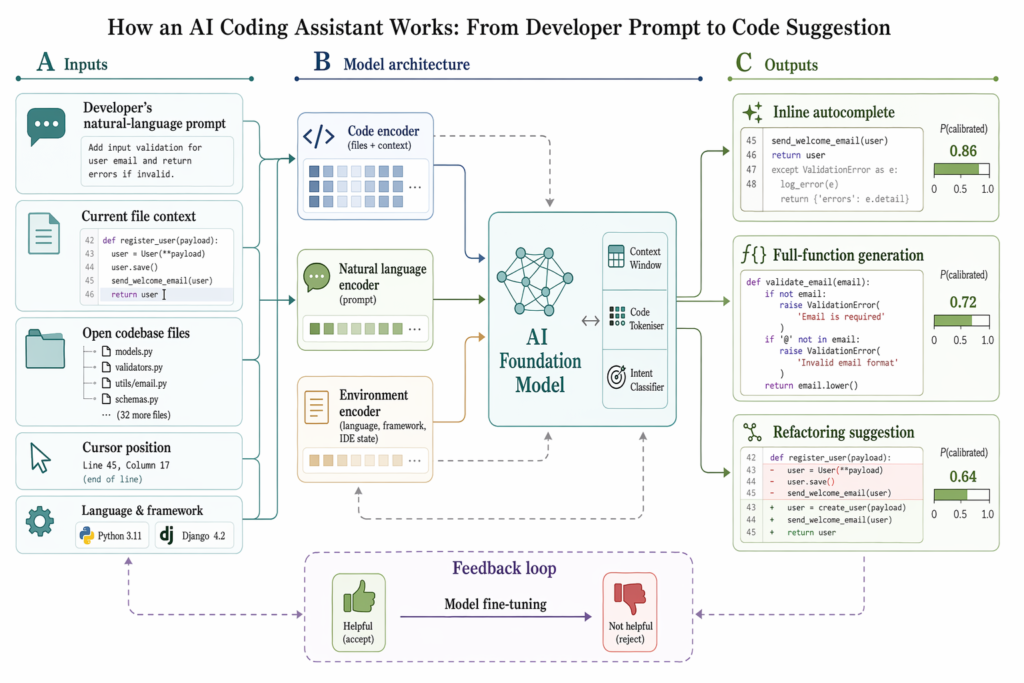

In practice, that means pairing a large language model with deep access to your codebase, so the tool can write, complete, refactor, review, or run code on your behalf. The simplest versions complete the next few lines as you type. The most advanced ones, often called agents, can read dozens of files at once, run terminal commands, execute your tests, and submit a finished pull request.

The category covers three loose tiers: inline completions, chat assistants that answer questions about your code in plain English, and agentic tools like Claude Code, Cursor’s Composer, and Windsurf’s Cascade that take a goal and execute changes across multiple files with limited supervision. The shift in 2025 and 2026 has been the move from autocomplete to autonomy. According to Stack Overflow’s 2025 Developer Survey, 84% of developers were using or planning to use AI tools in their workflow, up from 76% the year before.

Developers using GitHub Copilot complete coding tasks 55% faster on average, according to a controlled GitHub and MIT study on Copilot's productivity impact, which cut the time on the study's benchmark task (writing an HTTP server in JavaScript) from 2 hours 41 minutes to 1 hour 11 minutes. That is the headline figure most vendors quote, and it broadly holds up in independent research, with one important caveat: the gains are uneven across tasks.

The most striking field data comes from the UK public sector. In the UK Government AI Coding Assistant Trial run between November 2024 and February 2025, civil servants saved an average of 56 minutes per day, roughly 28 working days per year. Crucially, only 15% of AI-generated code was accepted without modification. Developers are getting strong first drafts that they edit, refactor, and ship.
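As a sanity check, the conversion from minutes per day to working days per year can be reproduced in a few lines of Python. The 225 working days a year and 7.5-hour working day are assumptions chosen for illustration, not figures taken from the trial report; with them, 56 minutes a day comes out at about 28 days.

```python
# Convert a per-day time saving into working days saved per year.
# Assumptions (not from the trial report): 225 working days a year
# and a 7.5-hour working day.

MINUTES_SAVED_PER_DAY = 56   # trial average
WORKING_DAYS_PER_YEAR = 225  # assumption
HOURS_PER_WORKING_DAY = 7.5  # assumption


def working_days_saved(minutes_per_day: float,
                       days_per_year: int = WORKING_DAYS_PER_YEAR,
                       hours_per_day: float = HOURS_PER_WORKING_DAY) -> float:
    """Return the annual saving expressed in working days."""
    total_minutes = minutes_per_day * days_per_year
    return total_minutes / (hours_per_day * 60)


print(round(working_days_saved(MINUTES_SAVED_PER_DAY), 1))  # prints 28.0
```

Changing either assumption moves the result noticeably, which is why "roughly" is the right word for the headline figure.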

GitHub’s own telemetry suggests Copilot now generates an average of 46% of a developer’s code, reaching 61% in Java projects. Stack Overflow’s 2025 survey found 51% of professional developers globally use AI tools daily. AI-authored code accounted for 26.9% of all production code in the November 2025 to February 2026 period, up from 22% the previous quarter.

CTO research across 450+ companies, summarised on ShiftMag, found that while 92.6% of developers used an AI coding assistant at least monthly, overall productivity gains sat at around 10%, not 50%. The gap between individual task speed and organisation-wide throughput is where the real implementation work happens.
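One way to see why a 55% task-level saving can shrink to around 10% at organisation level is an Amdahl's-law-style estimate: if only the coding portion of delivery gets faster, the overall saving is capped by that portion's share of total time. The coding shares below are hypothetical, chosen purely for illustration; only the 55% figure comes from the Copilot study quoted above.

```python
# Amdahl-style estimate: speeding up one part of the work only helps
# in proportion to that part's share of total delivery time.
# The coding_share values used below are hypothetical, not survey data.

TASK_TIME_REMAINING = 0.45  # a 55% cut in task time leaves 45% of it


def overall_time_saving(coding_share: float,
                        remaining: float = TASK_TIME_REMAINING) -> float:
    """Fraction of total delivery time saved when only coding speeds up."""
    if not 0.0 <= coding_share <= 1.0:
        raise ValueError("coding_share must be between 0 and 1")
    # Non-coding work is unchanged; coding work shrinks to `remaining`.
    new_total = (1 - coding_share) + coding_share * remaining
    return 1 - new_total


for share in (0.2, 0.4, 1.0):
    print(f"coding share {share:.0%}: {overall_time_saving(share):.0%} saved")
```

With coding at a hypothetical 20% of delivery time, the overall saving works out at about 11%, in the same range as the CTO research. The exact share will differ by team; the point is the shape of the relationship.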

There is no single winner, and any honest list reflects that most professionals now use two or three tools for different jobs. The most influential products this year are:

GitHub Copilot is the default for most teams, with over 20 million users and the deepest GitHub ecosystem integration. It runs in VS Code, JetBrains IDEs, and other editors as well as on GitHub.com, with an agent mode for multi-file tasks. Pricing: free, $10 a month Pro, $19 per user per month Business.

Cursor, made by Anysphere, is the fastest-growing AI developer tool in history, hitting $2 billion in annualised revenue by February 2026 with more than 1 million paying customers. It is a standalone AI-native IDE forked from VS Code, and its Composer mode is the gold standard for one-prompt multi-file feature generation. Pro costs $20 a month.

Claude Code is Anthropic’s terminal-native agent. It reads and modifies files, runs commands, executes tests, and handles the multi-file refactoring that breaks most other tools. Senior engineers reach for it when reasoning matters more than speed.

Windsurf (the renamed Codeium) keeps the best free tier on the market and supports 70+ languages across 40+ IDEs, including JetBrains products as well as Vim and Emacs, which many other vendors ignore. Its Cascade agent is competitive with Cursor's Composer.

Tabnine has pivoted into the regulated-enterprise niche. It is the only major assistant that supports a fully air-gapped on-premise deployment, with zero code retention guaranteed. Pricing starts at $39 per user per month.

Three more deserve a mention. Amazon Q Developer is built into the AWS ecosystem and unbeatable for CloudFormation or CDK work. Replit Agent turns natural-language prompts into deployed apps from the browser. OpenAI’s Codex / ChatGPT has re-emerged as a serious agent-first option in 2026 with GPT-5.5.

The use cases that survive contact with a real codebase fall into a small number of categories. Boilerplate and scaffolding generation is still the highest-return starting point: spinning up CRUD endpoints, test files, configuration scaffolds, and component stubs in minutes rather than hours. A Monzo backend engineer scaffolding a new Go microservice with authentication, logging, and error handling is a typical example.
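To show what that boilerplate typically looks like, here is a Python illustration of the pattern. It is not Monzo's Go code. A hypothetical `with_boilerplate` decorator adds request logging, a bearer-token check, and error-to-status mapping around any handler, leaving only business logic in the handler itself. The token constant and the dict-based request shape are assumptions made to keep the sketch self-contained.

```python
import functools
import hmac
import logging
from typing import Callable

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("accounts-service")

Request = dict               # hypothetical request shape: method, path, headers, body
Response = tuple[int, dict]  # (HTTP status code, JSON-style body)

API_TOKEN = "example-token"  # hypothetical; load from a secret store in real code


def with_boilerplate(handler: Callable[[Request], Response]) -> Callable[[Request], Response]:
    """Wrap a handler with request logging, bearer-token auth, and error mapping."""

    @functools.wraps(handler)
    def wrapper(request: Request) -> Response:
        logger.info("%s %s", request.get("method"), request.get("path"))

        # Constant-time comparison avoids leaking token contents via timing.
        supplied = request.get("headers", {}).get("Authorization", "")
        if not hmac.compare_digest(supplied, f"Bearer {API_TOKEN}"):
            logger.warning("rejected unauthenticated request")
            return 401, {"error": "unauthorised"}

        try:
            return handler(request)
        except KeyError as exc:  # missing required field -> client error
            logger.warning("bad request: missing %s", exc)
            return 400, {"error": f"missing field {exc}"}
        except Exception:        # anything else -> logged server error
            logger.exception("unhandled error")
            return 500, {"error": "internal error"}

    return wrapper


@with_boilerplate
def create_account(request: Request) -> Response:
    """Only the business logic lives here; the decorator supplies the rest."""
    body = request["body"]
    return 201, {"id": 1, "name": body["name"]}


print(create_account({
    "method": "POST",
    "path": "/accounts",
    "headers": {"Authorization": "Bearer example-token"},
    "body": {"name": "Ada"},
}))  # prints (201, {'id': 1, 'name': 'Ada'})
```

Centralising the cross-cutting concerns in one wrapper is exactly the kind of repetitive structure assistants generate well. A human still has to decide where the token really comes from and which errors deserve which status codes.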

Multi-file refactoring is where agentic tools earn their keep. Migrating a legacy Python 2 service to Python 3, renaming a function used across forty files, or updating an API contract across the entire repository are jobs where Claude Code, Cursor Composer, or Windsurf Cascade can flatten what used to be a multi-day exercise.
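To make the Python 2 to 3 case concrete, here is a small sample of the mechanical rewrites such a migration involves. Each function is written as the Python 3 result, with the Python 2 original in comments. The function names are hypothetical. A real migration across many files would also lean on the test suite to catch behavioural changes such as integer division, which no find-and-replace can settle on its own.

```python
# Python 3 versions of common Python 2 idioms an agent rewrites during
# a 2-to-3 migration. The Python 2 originals are shown in comments.


def summarise(totals):
    # Py2: for name, value in totals.iteritems():
    #          print "%s: %d" % (name, value)
    lines = []
    for name, value in totals.items():  # dict.iteritems() no longer exists
        lines.append("%s: %d" % (name, value))
    return lines


def average(values):
    # Py2: return sum(values) / len(values)
    # With int inputs Py2 `/` floored; Py3 `/` returns a float.
    # Assuming int inputs, `//` preserves the original behaviour.
    return sum(values) // len(values)


def decode_payload(raw):
    # Py2: str was a byte string, so raw bytes could be used as text.
    # Py3: bytes and str are distinct types; decode explicitly.
    if isinstance(raw, bytes):
        return raw.decode("utf-8")
    return raw


def safe_parse(text):
    # Py2: except ValueError, exc:
    try:
        return int(text)
    except ValueError as exc:  # Py3 requires the `as` form
        return "invalid: %s" % exc


for line in summarise({"widgets": 3}):
    print(line)  # Py2: print line
```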

Automated test generation is another reliable win. Cursor’s Composer can scaffold an entire React test suite with mocked API calls and accessibility checks. Tabnine and Amazon Q Developer go further with pull request review agents that flag missing input validation, dodgy error handling, or security smells before code lands in main.
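A Python analogue of that kind of generated test (not a React suite) might look like the following sketch. The function under test validates its input, which is the kind of check a review agent flags when it is missing, and the test replaces the network call with a `unittest.mock.MagicMock`. The endpoint URL and `fetch_username` are hypothetical.

```python
import io
import json
import unittest
from unittest import mock
from urllib.request import urlopen

API_URL = "https://api.example.com/users/"  # hypothetical endpoint


def fetch_username(user_id, opener=urlopen):
    """Return the username for user_id from a JSON API.

    `opener` is injected so tests can replace the network call with a mock.
    """
    # Input validation of the kind a review agent flags when it is missing.
    if isinstance(user_id, bool) or not isinstance(user_id, int) or user_id <= 0:
        raise ValueError("user_id must be a positive integer")
    with opener(f"{API_URL}{user_id}") as resp:
        return json.load(resp)["username"]


class FetchUsernameTest(unittest.TestCase):
    def test_returns_username_from_mocked_api(self):
        opener = mock.MagicMock()
        # The mocked response behaves like the file object urlopen returns.
        opener.return_value.__enter__.return_value = io.BytesIO(b'{"username": "ada"}')
        self.assertEqual(fetch_username(7, opener=opener), "ada")
        opener.assert_called_once_with(f"{API_URL}7")

    def test_rejects_invalid_ids(self):
        # Validation runs before any network call, so no mock is needed here.
        for bad in (0, -1, "7", None, True):
            with self.subTest(user_id=bad):
                with self.assertRaises(ValueError):
                    fetch_username(bad)
```

Injecting the opener rather than patching a module-level name keeps the test independent of how the file is imported. Saved as a file named `test_users.py` (a hypothetical name), it runs with `python -m unittest test_users`.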

Two more cases matter. Junior developers use these tools to navigate unfamiliar codebases, asking Claude Code to explain a sprawling Rails monorepo in plain English. UK founders build functioning MVPs over a weekend with Replit Agent and hand the code to an engineer for hardening. According to GitHub’s 2025 developer survey data, 40 to 47% of developers report that AI tools let them spend more time on system design rather than boilerplate. That is the real prize.

Is AI-generated code safe to ship? Not without review. The Cloud Security Alliance found that up to 62% of AI-generated code contains design flaws or known security vulnerabilities in independent testing. By April 2026, 84% of developers reported using AI coding tools daily, yet only 29% said they fully trusted AI-generated code for production without manual review.

The UK government trial makes this concrete. Only 15% of AI-generated code was accepted unmodified. The other 85% was edited, reshaped, or rewritten before being committed. That is not a failure of the tools; it is a feature of using them well.

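Three guardrails apply in practice: treat AI output as a first draft, review every change before it is committed, and use AI-powered review agents as an additional safety net rather than a substitute for human review.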

Despite the security caveats, 72% of UK public sector users in the government trial agreed AI coding assistants offered good value, and 58% said they would not want to return to pre-AI working conditions.

The UK has produced some of the most useful real-world deployment data anywhere. The most important case study is the government trial: between November 2024 and February 2025, the Government Digital Service and DSIT distributed 2,500 licences across 50+ central government organisations, with 1,100 GitHub Copilot Business licences redeemed. The trial validated that AI coding assistants are mature enough for regulated environments when paired with clear policy and review.

HMRC is the other landmark deployment. The tax authority has rolled out Microsoft 365 Copilot to staff, with plans to scale from 32,000 to 50,000 licences by 2026, according to Computer Weekly's reporting on the HMRC rollout. Microsoft 365 Copilot is a general productivity assistant rather than a dedicated coding tool, but the rollout is one of the largest public-sector AI tool deployments in Europe and gives the UK an unusually rich evidence base for how these tools behave inside large, conservative organisations.

Monzo is the most interesting private-sector example. The digital bank estimates roughly 20% of its new code will be AI-generated, and the engineering team treats AI enablement as a centralised platform responsibility rather than a free-for-all. Privacy guardrails, data-access controls, and approved-model lists sit alongside the tooling itself. Monzo’s trial framework has become a template that other UK fintechs are quietly copying.

The UK AI code assistant software market was valued at roughly USD 360 million in 2024, according to Global Data Route Analytics, and the broader UK generative AI coding assistants segment is projected to grow at a 26.8% CAGR through 2030, per Grand View Research. British organisations are not just experimenting; they are budgeting for the long run.

Start with three honest questions: where does your team work day to day, what are your compliance obligations, and which workflows would benefit most from automation?

If your team lives in GitHub and VS Code, GitHub Copilot is the lowest-friction starting point. If you want the most powerful in-IDE experience and developers will switch editors to get it, Cursor is the strongest choice. For heavy multi-file refactoring or terminal-first work, Claude Code is unmatched. For broad IDE support or a generous free tier, Windsurf wins.

Compliance changes the calculation. Financial services, healthcare, and any organisation that cannot let source code leave its perimeter should look at Tabnine’s air-gapped option first, with GitHub Copilot Enterprise or Amazon Q Developer as cloud-hosted alternatives. Stack Overflow’s 2025 AI survey results confirm data residency and code retention are now leading purchase factors at enterprise scale.

Run a structured trial. Pick a small group, set an eight-week evaluation window, and measure quantitative metrics (cycle time, PR throughput, test coverage) alongside qualitative ones (satisfaction, willingness to continue). Monzo and the UK government both used this approach and ended up with defensible, evidence-based rollouts rather than vibes-driven adoption.
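The quantitative half of such a trial can be computed from pull request data your Git host already holds. This sketch assumes a hypothetical `PullRequest` record with open and merge timestamps and a cohort label. It reports median cycle time and merged PRs per week for each cohort over an eight-week window. The sample records are made up, and test coverage would come from your CI tooling, so it is not modelled here.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class PullRequest:
    """Hypothetical PR record exported from your Git host."""
    opened: datetime
    merged: Optional[datetime]  # None if still open or closed without merging
    cohort: str                 # e.g. "trial" (AI-assisted) or "control"


def median_cycle_time_hours(prs):
    """Median hours from opening to merge, counting merged PRs only."""
    durations = [(pr.merged - pr.opened) / timedelta(hours=1)
                 for pr in prs if pr.merged is not None]
    return median(durations) if durations else None


def merged_per_week(prs, weeks):
    """Merged PRs per week across the evaluation window."""
    return sum(1 for pr in prs if pr.merged is not None) / weeks


def summarise_trial(prs, weeks=8):
    """Group PRs by cohort and report both metrics for each group."""
    cohorts = {}
    for pr in prs:
        cohorts.setdefault(pr.cohort, []).append(pr)
    return {
        name: {
            "median_cycle_hours": median_cycle_time_hours(group),
            "prs_per_week": merged_per_week(group, weeks),
        }
        for name, group in cohorts.items()
    }


# Made-up sample data for illustration only.
sample = [
    PullRequest(datetime(2026, 1, 5, 9), datetime(2026, 1, 6, 9), "trial"),    # 24 h
    PullRequest(datetime(2026, 1, 7, 9), datetime(2026, 1, 7, 21), "trial"),   # 12 h
    PullRequest(datetime(2026, 1, 5, 9), datetime(2026, 1, 8, 9), "control"),  # 72 h
    PullRequest(datetime(2026, 1, 9, 9), None, "control"),                      # still open
]
print(summarise_trial(sample))
```

The median is used rather than the mean so that one long-running PR does not dominate the comparison between cohorts.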

The direction of travel is clear. More pull requests will be opened by agents, with humans concentrated on architecture, review, and integration testing. That shift towards agentic tools will also drive consolidation: by 2027, the same dynamics that reduced cloud infrastructure to a handful of hyperscalers will probably leave four or five dominant coding platforms. Tabnine will own the regulated-industry niche; Replit will own education; Amazon Q will own the AWS stack. Everyone else will need a clear reason to exist.

Running alongside that is the rising premium on AI fluency as a working skill. The biggest predictor of who actually captures gains like the 55% time saving reported for Copilot is not which tool they pick. It is the developer's habit of prompting, reviewing, and iterating well.

In the UK, the government trial, the HMRC deployment, and bank-led adoption at Monzo give developers an unusually clear picture of how to deploy these tools responsibly. The question for 2026 is not whether to adopt an AI coding assistant. It is which two or three to adopt, and how to wire them into your team’s workflow without losing the engineering discipline that made the codebase worth working on in the first place.

What is the best AI coding assistant in 2026?

There is no single winner. GitHub Copilot wins on ecosystem integration and breadth, Cursor on raw AI-native IDE power, Claude Code on complex reasoning and refactoring, and Windsurf (formerly Codeium) on free-tier value. Most professional developers run two or three tools, picking the right one for each task rather than committing to a single vendor.

Will AI coding assistants replace software developers?

No. AI handles repetitive and boilerplate tasks, freeing developers for architecture, problem solving, business logic, and stakeholder collaboration. The role is evolving, not disappearing. The developers who use AI tools well will displace those who do not, but the headcount story across the industry remains one of growth, not contraction.

Is AI-generated code safe to use in production?

Only with human review. Independent research found up to 62% of AI-generated code contains design flaws or security vulnerabilities, and only 29% of developers say they trust AI output without checking it. Treat AI as a first-draft tool, always review before committing, and use AI-powered review agents as an additional safety net.

Which AI coding assistant is best for privacy and enterprise compliance?

Tabnine, for organisations that need fully air-gapped on-premise deployment with zero code retention. GitHub Copilot Business and Enterprise, plus Amazon Q Developer, are strong cloud-hosted alternatives for regulated industries that can accept a major cloud provider as a processor. All three commit to not training their models on customer code.

How much do AI coding assistants cost?

Pricing ranges from free (Windsurf individual, the GitHub Copilot free tier, Amazon Q Developer's free tier) through $10 to $20 a month for individual professional plans (GitHub Copilot, Cursor Pro, ChatGPT Plus), up to $39 to $59 per user per month for enterprise tiers like Tabnine. Most teams find that a $10 to $20 plan pays for itself within the first week of use.
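Whether a plan pays for itself that quickly depends on two numbers each team has to supply: minutes saved per day and the loaded hourly cost of a developer. This sketch uses the UK trial's 56-minute figure alongside a deliberately modest 5 minutes. The $50 hourly cost is purely hypothetical.

```python
import math


def days_to_break_even(monthly_price, minutes_saved_per_day, hourly_cost):
    """Working days until time saved covers one month's licence fee."""
    value_per_day = minutes_saved_per_day / 60 * hourly_cost
    if value_per_day <= 0:
        raise ValueError("minutes saved and hourly cost must be positive")
    return math.ceil(monthly_price / value_per_day)


HOURLY_COST = 50  # hypothetical loaded cost per developer hour, in dollars

print(days_to_break_even(20, 56, HOURLY_COST))  # trial-level saving
print(days_to_break_even(20, 5, HOURLY_COST))   # a much more modest saving
```

Under these assumptions, 56 minutes a day covers a $20 plan on the first day. Even 5 minutes a day gets there in about five working days, which is why the first-week claim is plausible for most teams.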