Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

“Agentic AI” is the latest phrase to escape the AI lab and land in the boardroom, and unlike most jargon it is doing real work. Gartner named it the number one strategic technology trend for 2025, and PwC’s 2025 AI Agent Survey reports that 79% of organisations have at least some AI agent implementation underway. This guide gives a clear, honest picture of what is new, what is overhyped, and how to start building if it makes sense for your organisation.

Agentic AI is an autonomous AI system that can set its own sub-goals, plan a path to achieve them, take actions using external tools, and learn from the results, all with minimal human supervision. AWS frames it crisply: an agentic system “can act independently to achieve pre-determined goals without constant human oversight,” in contrast to traditional AI which needs step-by-step guidance.

The word “agentic” comes from “agency”, meaning the ability to act in a goal-driven manner. That is the core distinction from a chatbot. A chatbot answers what you ask. An agentic system is given a goal (reconcile last month’s expense reports, investigate this support ticket, find a flight under £200 and book it) and works on it until it is done or stuck.

Three properties make a system agentic: goal orientation (it works towards an outcome, not a fixed script), tool use (it can call APIs, query databases, browse the web, and run code), and reflection (it checks its own work and changes course on failure). If a system is a hard-coded flowchart that just calls an LLM at one step, that is automation with sprinkles, not agentic AI.

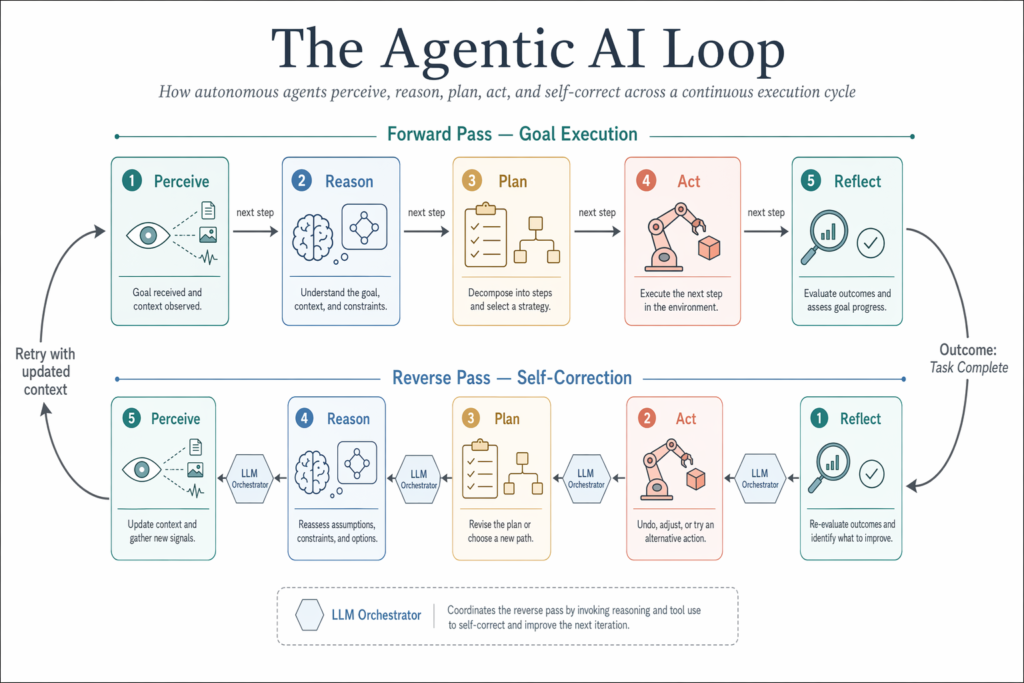

Google Cloud describes the agentic loop as a five-stage cycle: Perception, Reasoning, Planning, Action, and Reflection. Walking through each stage explains why these systems behave so differently from older automation.

Perception is the agent gathering information about its current state. That might be reading an email, pulling a CRM record, scraping a webpage, or querying a vector store. Reasoning is where the LLM takes over: a model such as GPT-4o, Claude Opus 4, or Gemini 2.5 sits at the centre as the “brain”, interpreting the situation. Planning breaks the goal into ordered steps. Action executes those steps by calling tools. Reflection evaluates the result and decides whether to continue, retry, or escalate.
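The five stages can be sketched as a plain Python loop. This is a minimal illustration under stated assumptions, not Google Cloud's implementation: `reason_and_plan` stands in for the LLM call, and the `lookup_order` tool, the order data, and the ticket fields are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AgentState:
    goal: str
    observations: dict = field(default_factory=dict)
    history: list = field(default_factory=list)
    done: bool = False


# Hypothetical tool; a real agent would call an API or database here.
def lookup_order(order_id: str) -> dict:
    orders = {"A100": {"status": "delivered", "days_since_delivery": 12}}
    if order_id not in orders:
        raise KeyError(f"unknown order {order_id}")
    return orders[order_id]


TOOLS = {"lookup_order": lookup_order}


def perceive(state: AgentState, ticket: dict) -> None:
    # Perception: gather the facts the agent currently has access to.
    state.observations.update(ticket)


def reason_and_plan(state: AgentState) -> list:
    # Reasoning + Planning: stand-in for an LLM call that returns ordered steps.
    if "order" not in state.observations:
        return [("lookup_order", {"order_id": state.observations["order_id"]})]
    return []  # nothing left to do


def act(step: tuple) -> dict:
    # Action: execute one planned step by calling the named tool.
    name, args = step
    return TOOLS[name](**args)


def reflect(state: AgentState, step: tuple, result, error) -> None:
    # Reflection: record the outcome; on failure the next plan retries the step.
    state.history.append((step[0], "error" if error else "ok"))
    if error is None:
        state.observations["order"] = result


def run_agent(goal: str, ticket: dict, max_iterations: int = 5) -> AgentState:
    state = AgentState(goal=goal)
    perceive(state, ticket)
    for _ in range(max_iterations):
        plan = reason_and_plan(state)
        if not plan:
            state.done = True
            break
        step = plan[0]
        try:
            result, error = act(step), None
        except Exception as exc:
            result, error = None, exc
        reflect(state, step, result, error)
    return state
```

Calling `run_agent("resolve refund", {"order_id": "A100"})` finishes after one tool call. With an unknown order ID the loop retries until `max_iterations` is reached and returns with `done` still `False`, which is the point at which a real system would escalate to a human.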

Red Hat highlights something that is easy to miss: agentic workflows can move forwards and backwards. They backtrack and self-correct when a step fails, which is the bit traditional pipelines cannot do. This is also why Model Context Protocol (MCP) matters. MCP is an emerging open standard that lets agents connect securely to external data sources and tools in a uniform way, removing the bespoke glue code that has slowed enterprise rollouts.

In production deployments, you almost never see a single agent. IBM notes that in a multi-agent system, each agent performs a specific subtask, with their efforts coordinated through an AI orchestrator. The orchestrator handles workflow tracking, resource usage, memory, data flow, and failure events. Think of it as a team lead who never sleeps.
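As a rough sketch of the orchestrator idea, the class below runs a fixed sequence of specialised agents, tracks what happened, and stops on the first failure. It is an illustration only, not IBM's design: the three agent functions, the task fields, and the `escalated` flag are hypothetical, and a production orchestrator would also manage memory and resource usage.

```python
# Hypothetical specialised agents; each would wrap its own model and tools.
def classify_agent(task: dict) -> dict:
    category = "return" if "faulty" in task["text"] else "other"
    return {**task, "category": category}


def warranty_agent(task: dict) -> dict:
    return {**task, "in_warranty": task["months_owned"] <= 12}


def notify_agent(task: dict) -> dict:
    return {**task, "notified": "warehouse"}


class Orchestrator:
    """Runs agents in order, tracks the workflow, and records failures."""

    def __init__(self, pipeline: list):
        # pipeline is a list of (name, agent_function) pairs.
        self.pipeline = pipeline
        self.log = []

    def run(self, task: dict) -> dict:
        for name, agent in self.pipeline:
            try:
                task = agent(task)
                self.log.append((name, "ok"))
            except Exception as exc:
                # Failure event: record it and hand the task to a human.
                self.log.append((name, f"failed: {exc!r}"))
                task = {**task, "escalated": True}
                break
        return task
```

For example, `Orchestrator([("classify", classify_agent), ("warranty", warranty_agent), ("notify", notify_agent)]).run({"text": "faulty kettle", "months_owned": 3})` passes the task through all three agents, while a task missing `months_owned` stops at the warranty step and comes back flagged for escalation.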

This is the comparison everyone gets wrong, and it costs companies real money in misaligned procurement.

Generative AI creates content (text, images, code, audio) when prompted. It is reactive. You give it an instruction, it produces an output, and the loop ends. ChatGPT in its original late-2022 form was pure generative AI.

Agentic AI uses generative AI as its reasoning engine but adds autonomy. As Google Cloud puts it, agentic AI uses LLMs as a brain to perform real-world actions in underlying systems to achieve higher-level business and operational goals. It is a superset: agentic AI can do anything generative AI can do, and it can also act on the result.

A concrete example. Ask generative AI, “Draft a refund email for this customer.” It writes the email and stops. Ask agentic AI, “Resolve this customer’s refund.” It reads the ticket, checks the warranty status in your CRM, verifies the return is within policy, drafts the email, processes the refund through Stripe, updates the case in Salesforce, and notifies the warehouse. Same underlying model, very different operating envelope.

This is also why the market is moving so fast. Precedence Research estimates the global agentic AI market grew from USD 5.25 billion in 2024 to USD 7.55 billion in 2025, and Mordor Intelligence projects a 44 to 46% CAGR through 2030. The action-taking layer is where the spending is heading.

Sloppy vocabulary has muddled this. An AI agent is a single autonomous entity that perceives its environment and takes actions. Agentic AI is the broader concept and platform layer that enables agents to operate, often involving multiple specialised agents coordinated by an orchestrator.

You can have an AI agent without agentic AI infrastructure (a small Python script with one LLM and one tool counts). You cannot really have agentic AI without agents, because they are the units of work. Salesforce’s Agentforce documentation puts it well: agents in a multi-agent system communicate with each other, share insights, hand off tasks, and coordinate via a meta-agent.

In practice, when a vendor says “agentic AI platform” they mean the orchestration, memory, governance, and tooling layer. When they say “AI agent” they usually mean a specific role-bound agent (a sales development agent, a tier-one support agent, a security triage agent) deployed on top of that platform.

The hype-to-reality gap is narrower than you might expect. Six categories are pulling away from the pack.

Customer service. Salesforce Agentforce is being deployed in e-commerce settings where an agent handles a faulty-product return end to end: classifying the issue, checking warranty in the CRM, processing the return, updating systems, and notifying the warehouse. Nevermined AI’s enterprise adoption research reports up to an 86% reduction in human task time for multi-step workflows of this kind.

Software development. Claude Code (from Anthropic) and GitHub Copilot Workspace both support end-to-end agentic coding. The agent reads a Jira ticket, navigates the repository, makes the change, runs the tests, and opens a pull request. This is now table stakes for engineering productivity at companies such as Shopify and Atlassian.

Healthcare. Hospital systems deploy agents that monitor electronic health record streams, flag deteriorating patients, and auto-draft escalation messages to the responsible clinician.

Supply chain. Retailers run agentic systems that detect weather disruptions, reroute shipments to alternative distribution centres, and update the ERP without human input. Walmart and Maersk have both publicly discussed pilots in this space.

IT operations. An agent watches network traffic and login patterns, isolates suspicious accounts, and triggers a security review with a pre-drafted report. UiPath’s Agentic Automation product is the most visible commercial example, layering AI reasoning on top of established RPA pipelines.

Financial trading. Quantitative hedge funds deploy LLM-powered agents that parse earnings call transcripts in real time and adjust positions within milliseconds of key disclosures.

Gartner predicts that 40% of enterprise applications will feature task-specific AI agents by the end of 2026, up from less than 5% in 2025. That is a real adoption curve, not a marketing chart.

The toolchain has consolidated faster than most expected. There is now a clear split between foundation model providers, enterprise platforms, and open-source frameworks.

On the foundation model side, OpenAI (GPT-4o, o3, ChatGPT Agents), Anthropic (Claude Opus 4, Sonnet 4), and Google (Gemini 2.5, Vertex AI Agent Builder) are the three providers most teams evaluate. Claude is widely used as the reasoning backbone in agentic frameworks, including Anthropic’s own Claude Code and many third-party agents.

On the enterprise platform side, the main contenders are:

On the open-source side, three frameworks dominate. LangChain and LangGraph together are the de facto standard for LLM-powered agents, with LangGraph adding stateful multi-agent workflows. AutoGen (Microsoft Research) focuses on multi-agent conversation patterns. CrewAI uses a role-based metaphor (researcher, writer, reviewer) that maps naturally onto specialised teams.

The right choice depends on where you are starting. Already deep in Salesforce or Microsoft? Pick the platform agent. Building from scratch with a strong engineering team? LangGraph or CrewAI on top of Claude or GPT-4o is the most flexible path.

The wave of demos has obscured a sobering data point. Gartner forecasts that more than 40% of agentic AI projects will be cancelled by 2027 because of governance gaps and unclear ROI. The risks cluster into four buckets.

Unintended actions. An agent with broad permissions can do real damage at machine speed. The fix is least-privilege access by default. Give each agent only the specific tools and data it needs for its role, and audit those grants.
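One way to express least-privilege access is a deny-by-default allowlist checked before every tool call. The sketch below is illustrative only: the role names, tool names, and the `ROLE_GRANTS` table are hypothetical, and a real deployment would typically keep grants in reviewed configuration and enforce them at the API layer as well.

```python
# Hypothetical role-to-tool grants; anything not listed is denied.
ROLE_GRANTS = {
    "support_agent": {"read_ticket", "read_crm", "draft_email"},
    "refund_agent": {"read_ticket", "issue_refund"},
}


class ToolNotPermitted(PermissionError):
    """Raised when an agent role tries to call a tool it was not granted."""


def authorise(role: str, tool: str) -> None:
    # Deny by default: unknown roles and ungranted tools both raise.
    if tool not in ROLE_GRANTS.get(role, set()):
        raise ToolNotPermitted(f"{role!r} may not call {tool!r}")


def call_tool(role: str, tool: str, registry: dict, **kwargs):
    # Check the grant first, then dispatch to the registered function.
    authorise(role, tool)
    return registry[tool](**kwargs)
```

Here a `support_agent` can read tickets but any attempt to call `issue_refund` raises `ToolNotPermitted` before the tool runs, and the `ROLE_GRANTS` table doubles as the list of grants to audit.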

Hallucinated tool calls. The model invents an API that does not exist or fabricates parameters. Mitigate by validating every tool call against a strict schema before execution and rejecting anything that does not match.
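A minimal sketch of that validation step, using only the standard library. The `TOOL_SCHEMAS` table, the tool names, and the `{"tool": ..., "args": ...}` call shape are assumptions made for illustration; teams often use a JSON Schema or a typed-model library for the same job.

```python
# Hypothetical schemas: tool name -> {argument name: required type}.
TOOL_SCHEMAS = {
    "issue_refund": {"order_id": str, "amount_pence": int},
    "read_ticket": {"ticket_id": str},
}


def validate_tool_call(call: dict) -> list:
    """Return a list of problems; an empty list means the call may run."""
    name = call.get("tool")
    if name not in TOOL_SCHEMAS:
        return [f"unknown tool: {name!r}"]

    args = call.get("args", {})
    if not isinstance(args, dict):
        return ["args must be an object"]

    schema = TOOL_SCHEMAS[name]
    errors = []
    # Reject arguments the model invented.
    for key in sorted(args.keys() - schema.keys()):
        errors.append(f"unexpected argument: {key!r}")
    # Require every declared argument with exactly the declared type.
    for key, expected in schema.items():
        if key not in args:
            errors.append(f"missing argument: {key!r}")
        elif type(args[key]) is not expected:
            errors.append(
                f"{key}: expected {expected.__name__}, got {type(args[key]).__name__}"
            )
    return errors
```

A call to a tool that does not exist, such as `{"tool": "delete_customer", "args": {}}`, is rejected with `["unknown tool: 'delete_customer'"]`. The exact type check (`type(...) is not expected`) is a deliberate choice so that `True` is not silently accepted where an `int` is required.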

Loops and runaway costs. Agents can get stuck reasoning forever, burning tokens. Cap iteration counts, set spend ceilings per task, and route any task that hits the cap to a human reviewer.
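The caps described above can be sketched as a small budget object that is checked before each step. This is an assumption-laden illustration: `TaskBudget`, `run_with_budget`, and the convention that each step returns `(done, cost)` are hypothetical, and the spend figure is in whatever currency you are billed in.

```python
class BudgetExceeded(Exception):
    """Raised when a task hits its iteration cap or spend ceiling."""


class TaskBudget:
    def __init__(self, max_iterations: int, max_spend: float):
        self.max_iterations = max_iterations
        self.max_spend = max_spend
        self.iterations = 0
        self.spend = 0.0

    def start_step(self) -> None:
        # Check the caps before doing any more work.
        if self.iterations >= self.max_iterations:
            raise BudgetExceeded("iteration cap reached")
        if self.spend >= self.max_spend:
            raise BudgetExceeded("spend ceiling reached")
        self.iterations += 1

    def record_cost(self, cost: float) -> None:
        self.spend += cost


def run_with_budget(step, budget: TaskBudget) -> str:
    """Call step() until it reports completion or the budget trips.

    step() is assumed to return (done, cost) for one agent iteration.
    """
    try:
        while True:
            budget.start_step()
            done, cost = step()
            budget.record_cost(cost)
            if done:
                return "completed"
    except BudgetExceeded as exc:
        # Route capped tasks to a human reviewer instead of retrying.
        return f"escalated to human: {exc}"
```

With `TaskBudget(max_iterations=3, max_spend=1.0)` and a step that never finishes, the loop runs exactly three iterations and then returns `"escalated to human: iteration cap reached"`.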

Compounding errors across long chains. Errors multiply: if each step is 95% accurate and failures are independent, a ten-step chain succeeds only about 60% of the time (0.95^10 ≈ 0.60). Keep agentic workflows scoped, insert human-in-the-loop checkpoints at high-stakes decisions, and instrument every action so failures are visible.
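The arithmetic behind the 95%-to-60% figure is easy to check. The sketch below assumes each step succeeds independently with the same probability, which is a simplification; the function names are illustrative.

```python
def chain_accuracy(step_accuracy: float, steps: int) -> float:
    """Probability that every step in a chain succeeds, assuming independence."""
    return step_accuracy ** steps


def max_steps(step_accuracy: float, target: float) -> int:
    """Longest chain whose end-to-end accuracy stays at or above target."""
    if not (0 < step_accuracy < 1) or not (0 < target <= 1):
        raise ValueError("step_accuracy must be in (0, 1) and target in (0, 1]")
    steps = 0
    while chain_accuracy(step_accuracy, steps + 1) >= target:
        steps += 1
    return steps


print(round(chain_accuracy(0.95, 10), 3))  # 0.599
print(max_steps(0.95, 0.80))               # 4
```

Under these assumptions, a workflow built from 95%-accurate steps can only run four steps before end-to-end accuracy drops below 80%, which is one way to decide where a human checkpoint belongs.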

The pattern that works in 2026 is what PwC calls supervised autonomy. Agents do the work. Humans set the goals, approve the high-stakes steps, and review the outcomes. PwC’s survey found that 93% of IT leaders plan to introduce autonomous agents within two years, and the ones reporting success almost universally have governance frameworks in place before they scale.

Agentic AI is not the preserve of well-funded enterprises. Open-source frameworks and pay-as-you-go cloud APIs mean a viable agentic prototype can be built for under £100 a month in API costs. Here is the route that consistently works.

Step 1: Pick a single workflow. Do not start by building a general-purpose platform. Pick one painful, well-defined workflow (invoice triage, lead qualification, on-call incident first response) where inputs, outputs and success metrics are clear.

Step 2: Choose a foundation model. For most use cases, Claude Sonnet 4 or GPT-4o gives the best ratio of reasoning quality to cost. Use the bigger models (Claude Opus 4, o3) only if your initial tests show genuine reasoning failures.

Step 3: Pick a framework. Red Hat and most enterprise teams default to LangGraph for production work because of its explicit state graph. CrewAI is faster for prototyping role-based teams. AutoGen suits scenarios where you need agents talking to agents.

Step 4: Define tools narrowly. Each tool should do one thing, take typed parameters, and have a clear failure mode. Resist giving agents a generic shell or unrestricted database access.
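A narrowly defined tool might look like the sketch below: one job, one typed parameter, and an explicit status for each failure mode instead of a raw exception. The invoice store, the `INV-1234` ID format, and the `ToolResult` shape are hypothetical, chosen to match the invoice-triage example.

```python
import re
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class ToolStatus(Enum):
    OK = "ok"
    NOT_FOUND = "not_found"
    INVALID_INPUT = "invalid_input"


@dataclass(frozen=True)
class ToolResult:
    status: ToolStatus
    data: Optional[dict] = None
    message: str = ""


# Hypothetical in-memory store standing in for a finance system.
_INVOICES = {
    "INV-1001": {"supplier": "Acme Ltd", "total_pence": 125000, "paid": False},
}


def get_invoice(invoice_id: str) -> ToolResult:
    """Read one invoice by ID. Read-only: it cannot modify or delete anything."""
    if not isinstance(invoice_id, str) or not re.fullmatch(r"INV-\d{4}", invoice_id):
        return ToolResult(
            ToolStatus.INVALID_INPUT, message="invoice_id must look like INV-1234"
        )
    invoice = _INVOICES.get(invoice_id)
    if invoice is None:
        return ToolResult(ToolStatus.NOT_FOUND, message=f"no invoice {invoice_id}")
    # Return a copy so callers cannot mutate the underlying record.
    return ToolResult(ToolStatus.OK, data=dict(invoice))
```

Because every outcome is a `ToolResult`, the agent's reflection step can branch on `status` rather than parsing error strings, and the tool exposes nothing beyond a single read.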

Step 5: Add memory and state. Use a vector store (Pinecone, Weaviate, pgvector) for long-term memory. Implement agentic RAG, where the agent decides when and what to retrieve, rather than baking retrieval into every step.
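The control flow of agentic RAG can be shown with a toy example. Everything here is a stand-in: `decide_retrieval` replaces the model's decision with a keyword check, and `retrieve` uses word overlap over an in-memory dictionary where a real system would query a vector store. Only the shape of the decision (retrieve or skip, and with what query) is the point.

```python
import re

# Hypothetical knowledge base standing in for a vector store.
DOCS = {
    "refund-policy": "Refunds are accepted within 30 days of delivery.",
    "shipping": "Standard shipping takes 3 to 5 working days.",
}


def tokens(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, k: int = 1) -> list:
    """Toy retrieval: rank documents by word overlap with the query."""
    q = tokens(query)
    ranked = sorted(
        DOCS.items(), key=lambda item: len(q & tokens(item[1])), reverse=True
    )
    return [doc_id for doc_id, text in ranked[:k] if q & tokens(text)]


def decide_retrieval(question: str):
    """Stand-in for the model: return a search query, or None to skip retrieval."""
    policy_words = {"refund", "refunds", "shipping", "delivery", "policy"}
    hits = tokens(question) & policy_words
    return " ".join(sorted(hits)) if hits else None


def answer(question: str) -> dict:
    # The agent chooses whether to retrieve, and what to search for.
    query = decide_retrieval(question)
    context = retrieve(query) if query else []
    return {"retrieved": query is not None, "query": query, "context": context}
```

A question such as "What is 2 + 2?" skips retrieval entirely, while "Can I get a refund after delivery?" triggers a search and pulls back the `refund-policy` document.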

Step 6: Instrument everything. Log every tool call, model response, and state change. AgentOps tooling exists precisely because regular APM is not enough.
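As a starting point before adopting dedicated tooling, every tool call can be wrapped in a decorator that emits one structured log record. This sketch uses only the standard library; the `agent.audit` logger name and the record fields are assumptions, and the wrapper accepts keyword arguments only so that each argument is logged by name.

```python
import functools
import json
import logging
import time

logger = logging.getLogger("agent.audit")
logger.setLevel(logging.INFO)


def audited(tool):
    """Log the name, arguments, outcome, and duration of every tool call."""

    @functools.wraps(tool)
    def wrapper(**kwargs):
        record = {"tool": tool.__name__, "args": kwargs}
        start = time.perf_counter()
        try:
            result = tool(**kwargs)
            record["status"] = "ok"
            return result
        except Exception as exc:
            record["status"] = "error"
            record["error"] = repr(exc)
            raise  # never swallow the failure, only record it
        finally:
            elapsed_ms = (time.perf_counter() - start) * 1000
            record["duration_ms"] = round(elapsed_ms, 2)
            # default=str keeps logging from failing on non-JSON values.
            logger.info(json.dumps(record, default=str))

    return wrapper
```

Decorating a tool with `@audited` and attaching a handler to the `agent.audit` logger (for example via `logging.basicConfig()`) yields one JSON line per call, including calls that fail.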

Step 7: Pilot, measure, then scale. Run for four to six weeks, compare against a control workflow, and only then expand.

The organisations getting real value from agentic AI in 2026 are not the ones with the biggest budgets. They are the ones disciplined enough to start narrow and build governance in from day one.

What is agentic AI in simple terms?

Agentic AI is an AI system that can set its own sub-goals, plan how to achieve them, take actions using tools, and learn from the results, all with minimal human supervision. Think of it as an AI that does things, not just answers questions.

What is the difference between agentic AI and generative AI?

Generative AI creates content (text, images, code) when prompted. Agentic AI goes further: it uses generative AI as its reasoning engine, but can autonomously plan, use tools, call APIs, and complete multi-step tasks to achieve a real-world goal, without waiting for a human after each step.

What is the difference between an AI agent and agentic AI?

An AI agent is a single autonomous entity that perceives its environment and takes actions. Agentic AI is the broader concept and technology platform that enables agents to operate, often involving multiple specialised agents coordinated by an orchestrator.

Is agentic AI safe?

Agentic AI introduces real risks: agents can take unintended actions, accumulate permissions, or make errors at scale. Safe deployment requires human-in-the-loop checkpoints, least-privilege access controls, solid monitoring, and clear governance frameworks.

How do I build an agentic AI system?

Start with a foundation model (such as GPT-4o, Claude, or Gemini), pick an agent framework (LangChain with LangGraph, AutoGen, or CrewAI), define the tools and APIs the agent can call, implement memory and state management, then add orchestration logic. Begin with a scoped pilot workflow before scaling.