Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

If you’ve used ChatGPT, you’ve already met the cousin of an AI agent. The agent is the bit that takes the conversation further: it doesn’t just answer, it acts. It books the meeting, files the ticket, scrapes the data, sends the follow-up email. For the first time in software, you can describe a goal in plain language and have a system pursue it across multiple steps, on its own.

That shift is why every major cloud provider, consultancy and enterprise software vendor is talking about agents. The global AI agents market was valued at $7.63 billion in 2025 and is projected to reach $182.97 billion by 2033, growing at a compound annual rate of 49.6 percent (Source: Grand View Research, 2025). It’s one of the fastest-growing technology categories on record.

This guide explains what an AI agent actually is, how one works under the bonnet, where the real business value sits, and what beginners should and should not expect. No jargon walls, no hype. Just the bits you need.

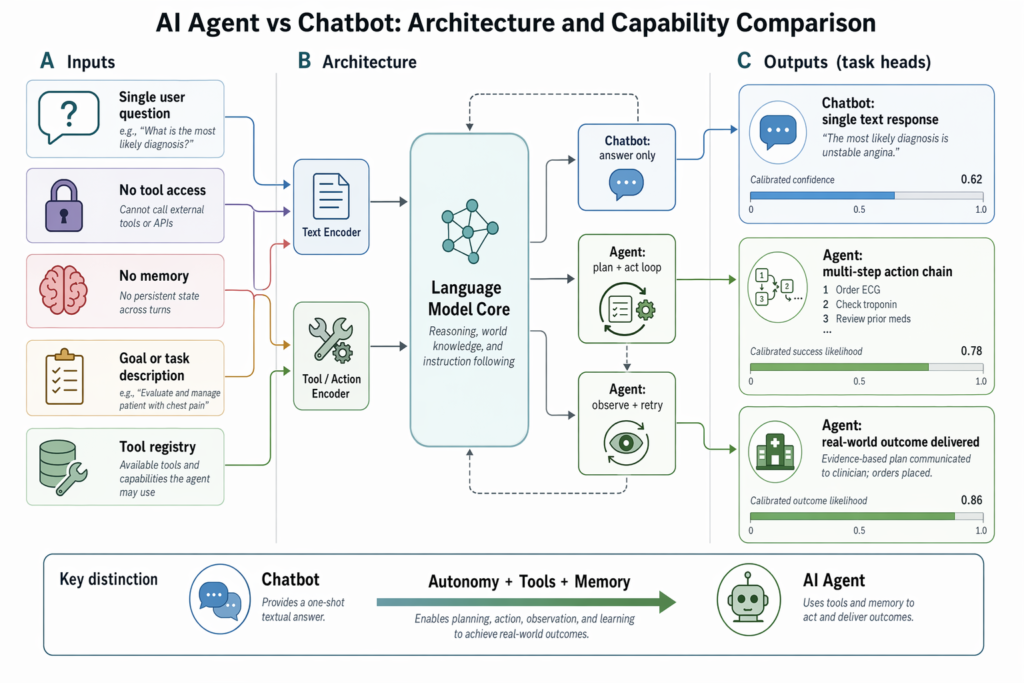

An AI agent is a software system that pursues a goal on your behalf, using a large language model as its reasoning engine and a set of tools to take real-world actions. It can plan, decide, act, observe the result, and adjust, all without you prompting it at every step (Source: Google Cloud, 2025).

Think of a regular chatbot as a clever assistant on a phone line. You ask, it answers, the call ends. An agent is closer to an intern with access to your tools. Give it a brief like “draft a competitor analysis on three rivals and email it to my manager by 5pm” and it will search the web, read pages, summarise findings, format a document and send the email, looping back only when it gets stuck.

Four ingredients make this possible: a persona or role definition that frames its job, memory so it can track what it’s already done, tools it can call such as search APIs or a code interpreter, and the underlying language model that does the thinking (Source: Google Cloud, 2025). Strip any one of those out and you’re back to a chatbot.
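To make the four ingredients concrete, here is a minimal Python sketch. The `Agent` class, its field names and the `build_prompt` helper are illustrative assumptions rather than any framework's API, and the model is a plain function standing in for a real language model.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    persona: str                        # role definition that frames the job
    model: Callable[[str], str]         # language model: prompt in, text out
    tools: dict = field(default_factory=dict)    # tool name -> callable
    memory: list = field(default_factory=list)   # record of what has been done

    def build_prompt(self, goal: str) -> str:
        """Combine persona, tools and memory into the text sent to the model."""
        history = "\n".join(self.memory) or "(nothing yet)"
        tool_names = ", ".join(sorted(self.tools)) or "(none)"
        return (
            f"Role: {self.persona}\n"
            f"Goal: {goal}\n"
            f"Tools: {tool_names}\n"
            f"Done so far:\n{history}"
        )


# Hypothetical wiring: a stub model and a stub search tool.
agent = Agent(
    persona="Research assistant",
    model=lambda prompt: "ok",
    tools={"search": lambda query: "results"},
)
agent.memory.append("searched for rivals")
print(agent.build_prompt("draft a competitor analysis"))
```

With an empty `tools` dictionary the prompt still builds, but the system can only talk, which is the chatbot case.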

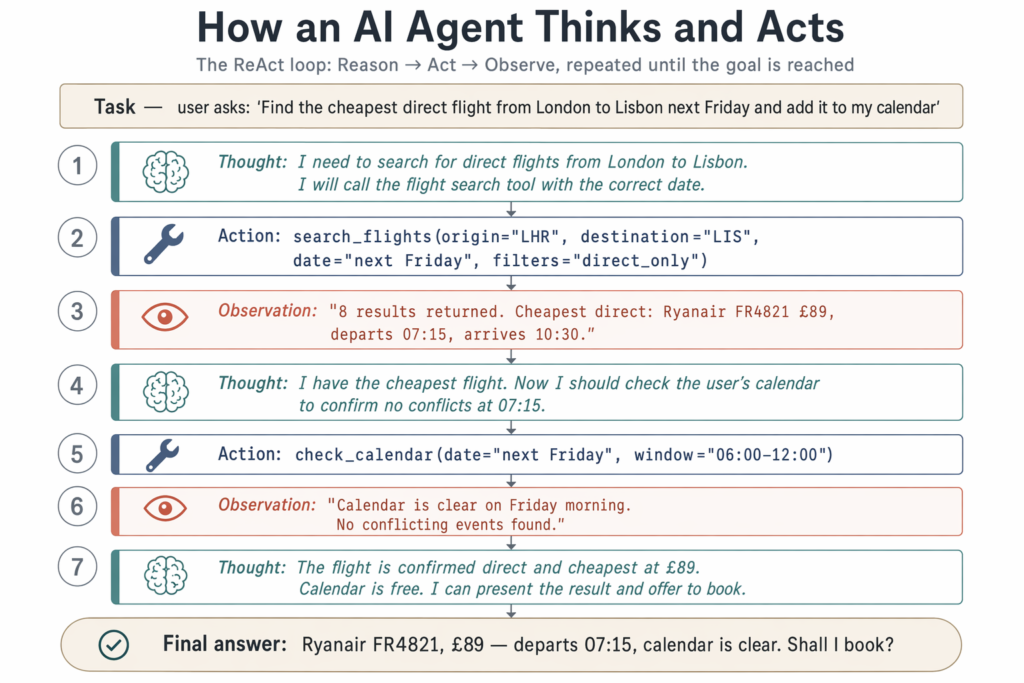

Under the bonnet, most modern agents run a loop called ReAct, short for Reasoning and Acting. The model thinks about what to do next, picks a tool, executes it, reads the result, and decides the following step. That cycle repeats until the goal is met or a stopping condition triggers (Source: Google Cloud, 2025).
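The loop can be sketched in a few lines of Python. This is a simplified illustration of the reason, act, observe cycle, not a production implementation: `run_agent`, `scripted_model` and the `lookup` tool are hypothetical names, and the scripted function stands in for a real language model.

```python
def run_agent(model, tools, goal, max_steps=5):
    """Repeat reason -> act -> observe until the model finishes or steps run out."""
    history = [f"Goal: {goal}"]
    for _ in range(max_steps):
        decision = model(history)                  # reason: choose the next step
        if decision["action"] == "finish":
            return decision["answer"]              # goal met
        tool = tools[decision["action"]]
        observation = tool(decision["input"])      # act: call the chosen tool
        history.append(                            # observe: record the result
            f"Action: {decision['action']}({decision['input']}) -> {observation}"
        )
    return "Stopped: step limit reached"           # stopping condition


def scripted_model(history):
    # Stand-in for an LLM: look something up once, then report what came back.
    if len(history) == 1:
        return {"action": "lookup", "input": "capital of Portugal"}
    return {"action": "finish", "answer": history[-1].split("-> ")[1]}


tools = {"lookup": lambda query: "Lisbon"}
print(run_agent(scripted_model, tools, "What is the capital of Portugal?"))
```

The `max_steps` cap is the stopping condition: without it, a model that never decides to finish would loop forever.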

Imagine asking an agent to find the cheapest direct flight from London to Lisbon next Friday. Step one: it reasons that it needs flight data and picks a search tool. Step two: it queries the API and reads back ten options. Step three: it filters out connecting flights, sorts by price, and returns the winner. If the API returns an error, the agent notices, retries, or asks you for clarification.
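Steps two and three, plus the retry behaviour, might look like the sketch below. The `Flight` fields, the `search_with_retry` helper, the `flaky_search` function and the sample fares are all made up for illustration; a real agent would call an actual flight-search API.

```python
from dataclasses import dataclass


@dataclass
class Flight:
    airline: str
    price_gbp: float
    stops: int


def cheapest_direct(flights):
    """Drop connecting flights, then return the lowest fare (or None)."""
    direct = [f for f in flights if f.stops == 0]
    return min(direct, key=lambda f: f.price_gbp) if direct else None


def search_with_retry(search, retries=2):
    """Call the search tool, retrying on connection errors before giving up."""
    last_error = None
    for _ in range(retries + 1):
        try:
            return search()
        except ConnectionError as err:
            last_error = err
    raise RuntimeError("search failed; ask the user for clarification") from last_error


attempts = []


def flaky_search():
    # Hypothetical API: fails on the first call, succeeds on the second.
    attempts.append(1)
    if len(attempts) == 1:
        raise ConnectionError("timeout")
    return [
        Flight("AirA", 120.0, 1),
        Flight("AirB", 89.0, 0),
        Flight("AirC", 75.0, 1),
        Flight("AirD", 95.0, 0),
    ]


best = cheapest_direct(search_with_retry(flaky_search))
print(best)
```

Note that the cheapest fare overall (AirC) is rejected because it has a stop, so the filter has to run before the sort.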

The reasoning engine is almost always a large language model: Anthropic’s Claude, OpenAI’s GPT family, or Google’s Gemini. These models support structured tool use, meaning they can output a clean JSON instruction like “call the calendar API with these parameters” rather than just generating prose (Source: IBM, 2025). That single capability is what unlocked the current agent boom.
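As a rough illustration of structured tool use, the sketch below parses a JSON instruction and routes it to a matching function. The exact JSON shape differs between vendors, so the `tool` and `arguments` keys are an assumed format, and `create_event` and `dispatch` are hypothetical names.

```python
import json


def create_event(title: str, start: str) -> str:
    # Stand-in for a real calendar API call.
    return f"Created '{title}' at {start}"


TOOLS = {"create_event": create_event}


def dispatch(raw: str) -> str:
    """Parse the model's JSON instruction and call the named tool."""
    call = json.loads(raw)
    name = call["tool"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**call["arguments"])


# What a model might emit instead of prose (assumed format).
model_output = (
    '{"tool": "create_event", '
    '"arguments": {"title": "Standup", "start": "2025-06-06T09:00"}}'
)
print(dispatch(model_output))
```

Because the output is machine-readable, the surrounding program can validate the tool name and arguments before anything runs, which is much harder to do with free-form text.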

A chatbot processes one instruction at a time and waits for your next message. An AI agent sets its own sub-goals, calls external tools, holds memory across steps, and completes multi-step workflows with minimal human intervention (Source: Forbes, 2025). The gap is autonomy and action.

Here’s a useful way to picture it. ChatGPT is a brilliant tutor sitting behind a desk. You ask questions, it answers, you copy the answers somewhere else, you do the work. An AI agent is a colleague with access to your inbox, browser and codebase. You hand it the task, walk away, and check on progress.

In practice the line is blurring. ChatGPT now ships with browsing, code execution and connectors, all of which are agent-like behaviours. But the deeper distinction is architectural. Agents are designed to plan, retry, persist state and chain dozens of tool calls. Chatbots are designed for conversation. If a system can autonomously complete a job requiring five or six different actions across different applications, it’s an agent.

AI agents are usually grouped by how they make decisions, from simple rule-followers up to systems that learn over time. The classic taxonomy covers simple reflex agents, model-based reflex agents, goal-based agents, utility-based agents, and learning agents (Source: IBM, 2025). Most modern LLM-powered agents combine elements of the last three.

A second axis worth knowing is single-agent versus multi-agent. In a multi-agent system, several specialised agents collaborate: one researcher, one writer, one reviewer, passing work between themselves (Source: Google Cloud, 2025). This is the architecture behind many of the more impressive demos you’ll have seen, often labelled “agentic AI”. Klarna, for instance, now runs multi-agent pipelines across customer service and internal operations, treating individual agents as interchangeable workers in a coordinated team.
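The researcher, writer and reviewer hand-off can be pictured as a simple pipeline. In this sketch each "agent" is a plain function with a hypothetical name; in a real system each would wrap its own language model, tools and instructions.

```python
def researcher(topic: str) -> str:
    return f"notes on {topic}"


def writer(notes: str) -> str:
    return f"Draft based on {notes}"


def reviewer(draft: str) -> str:
    return draft + " [reviewed]"


def run_pipeline(agents, task: str) -> str:
    """Pass the work from one specialised agent to the next, in order."""
    work = task
    for agent in agents:
        work = agent(work)
    return work


print(run_pipeline([researcher, writer, reviewer], "rival pricing"))
```

Because each stage only sees the previous stage's output, any one agent can be swapped for another with the same input and output, which is the sense in which agents become interchangeable workers.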

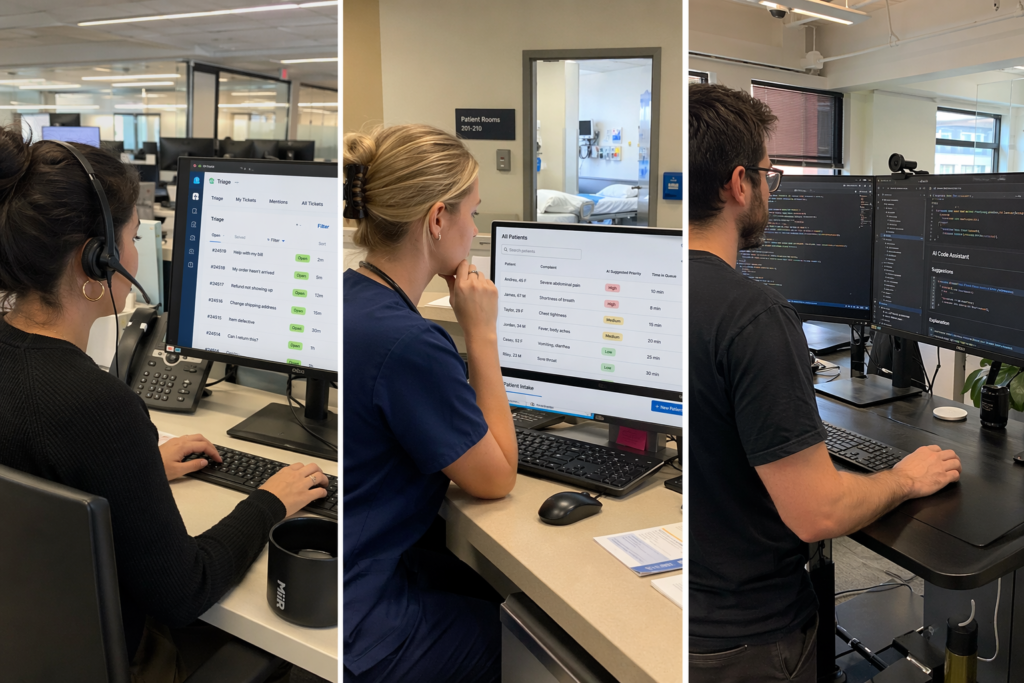

Agents are already deployed at serious scale, particularly in customer support, financial services, retail and software engineering. Customer service tops the list, with 45.8 percent of companies citing it as their primary agent use case (Source: Nevermined, 2025). Intercom’s Fin AI Agent resolves over half of incoming support conversations without a human stepping in.

Financial services has moved fastest at the enterprise end. Around 70 percent of financial institutions are already using AI agents for fraud detection and risk analysis (Source: Nevermined, 2025). JPMorgan Chase runs agents that flag fraudulent transactions, automate parts of loan approval, and assist legal and compliance teams (Source: MIT Sloan, 2025). In retail, 63 percent of retailers use agents for marketing, inventory and customer service, and Walmart has built LLM-powered agents to handle personal shopping and merchandise planning (Source: Nevermined, 2025).

Healthcare is quietly catching up. Around 42 percent of EU hospitals now use AI agents for diagnosis and triage, with another 19 percent planning adoption shortly (Source: Nevermined, 2025). Software engineering is the loudest frontier, with autonomous coding agents like Devin from Cognition AI writing, testing and shipping code end-to-end, and GitHub Copilot Workspace turning issue descriptions into full implementation plans.

For builders, the agent landscape splits into open-source frameworks and managed platforms. The most widely adopted open-source frameworks are LangGraph, AutoGen and CrewAI. LangChain, the broader ecosystem behind LangGraph, has crossed 116,000 GitHub stars, and LangGraph itself sits at around 19,200 stars, reflecting how quickly developers have rallied around graph-based agent design (Source: LinkedIn, 2025).

LangGraph is the heavy lifter: stateful, controllable, built for production workflows. Microsoft’s AutoGen specialises in multi-agent conversations where agents delegate tasks to one another. CrewAI takes a friendlier role-based approach; you define a Researcher, a Writer and a Reviewer, and the framework wires them up. On the managed side, the OpenAI Agents SDK and Assistants API give you memory, code interpretation and file search out of the box. Google Vertex AI Agent Builder, Amazon Bedrock Agents and IBM watsonx Orchestrate target enterprise teams wanting governance, scale and cloud data integration.

For non-developers, Microsoft Copilot Studio is the most accessible entry point, letting business users design agents that plug into Microsoft 365 and Dynamics with little or no code. Salesforce Agentforce takes a similar approach for sales and service teams. Major vendors including Microsoft, Salesforce, Google and IBM are now embedding agent capabilities directly into the software you already pay for (Source: MIT Sloan, 2025). For most businesses, the first agent they encounter will simply appear inside an existing product.

You don’t need to know how to code to build an AI agent. While the most powerful agents are still built by engineers, a wave of no-code and low-code tools means anyone with a clear use case can build a working agent in an afternoon. Microsoft Copilot Studio, Salesforce Agentforce, and CrewAI’s higher-level interfaces let you configure agents using natural language rather than Python (Source: IBM, 2025).

A sensible beginner path looks like this. Pick one annoying, repeatable task. Choose a platform that already integrates with the tools that task touches. Copilot Studio is the obvious pick if you live in Microsoft 365. Write down the steps a human would take, in order. Configure the agent to follow those steps, granting access only to the systems it strictly needs. Test it on five real cases, watch where it fails, and tighten the instructions.

The bigger constraint is rarely technical; it’s process clarity. Agents struggle when humans can’t describe the workflow themselves, when source data is messy, or when the task involves judgement calls the business has never written down. The teams who succeed with agents tend to be the same ones who were already good at documenting how they work.

Agents make mistakes, and giving them tools means those mistakes can have real consequences. They hallucinate facts, misinterpret goals, get stuck in loops, and occasionally take actions a human would never sanction. Best practice involves human checkpoints, particularly for irreversible steps: sending external emails, moving money, or deleting records.
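A human checkpoint can be as simple as a gate in front of anything that cannot be undone. The sketch below is one possible shape, with hypothetical action names and an `approve` callback standing in for however your team actually signs things off.

```python
# Actions that cannot be undone once they have run (illustrative list).
IRREVERSIBLE = {"send_external_email", "move_money", "delete_records"}


def execute(action, run, approve):
    """Run an action, pausing for a human decision if it cannot be undone."""
    if action in IRREVERSIBLE and not approve(action):
        return f"{action}: held for human review"
    return run()


def nobody_approves(action):
    return False


# A reversible action runs straight away; an irreversible one waits.
print(execute("summarise_inbox", lambda: "summary ready", nobody_approves))
print(execute("move_money", lambda: "payment sent", nobody_approves))
```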

Adoption is racing ahead of governance. By 2025, 88 percent of organisations were using AI in at least one business function, up from 78 percent the year before, and 62 percent were either experimenting with or actively scaling agentic AI (Source: McKinsey, 2025). A further 82 percent of enterprises plan to integrate agents into operations within three years (Source: Nevermined, 2025). Funding is following the curve: AI agent startups raised $3.8 billion in 2024, almost three times the prior year (Source: Nevermined, 2025).

The other limitation worth flagging is brittleness. Agents perform well on common patterns and stumble on edge cases, particularly when a tool returns unexpected data or a website redesigns its layout. Treat your first agent as a junior team member: useful, promising, and in need of supervision. Start with low-risk tasks, log every action, and expand the remit only as you build confidence.
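Logging every action does not need special tooling. As a minimal sketch, a decorator can record each tool call, its arguments, and either the result or the error. The `logged` decorator, the in-memory `audit_log` list and the `fetch_price` tool are assumptions for illustration; a real deployment would write to durable storage.

```python
import functools
import time


def logged(log):
    """Wrap a tool so every call is appended to `log`, success or failure."""
    def decorator(tool):
        @functools.wraps(tool)
        def wrapper(*args, **kwargs):
            entry = {"tool": tool.__name__, "args": args,
                     "kwargs": kwargs, "time": time.time()}
            try:
                entry["result"] = tool(*args, **kwargs)
                return entry["result"]
            except Exception as err:
                entry["error"] = repr(err)   # unexpected data shows up here
                raise
            finally:
                log.append(entry)            # runs on both paths
        return wrapper
    return decorator


audit_log = []


@logged(audit_log)
def fetch_price(sku):
    # Hypothetical tool that breaks on an unexpected input.
    if sku == "unknown":
        raise KeyError(sku)
    return 9.99


fetch_price("abc-123")
try:
    fetch_price("unknown")
except KeyError:
    pass
print(len(audit_log), "actions logged")
```

The failed call is logged alongside the successful one, so edge cases leave a trail you can review before widening the agent's remit.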

Agents aren’t a replacement for thoughtful product design, clean data, or competent humans. They’re an amplifier. Like any amplifier, they make good systems better and bad systems worse. The companies pulling ahead in 2026 are treating agents as a serious operating model question, not a feature toggle.

ChatGPT on its own is not an AI agent. It is a chatbot interface to a large language model. An AI agent uses a language model as its brain but adds memory, tools and a planning loop so it can take multi-step actions on your behalf. ChatGPT now includes some agent-like features, but a true agent is built around autonomy, not conversation.

Agents can be safe to use, with the right guardrails. Use platforms that support read-only modes, scoped permissions and human approval steps for high-risk actions. Avoid handing an agent unrestricted access to anything irreversible until you’ve watched it run dozens of low-stakes tasks and you trust its behaviour.
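To show what read-only modes and scoped permissions mean in practice, here is a small sketch. `ScopedTools` and the invoice tools are hypothetical; managed platforms expose the same ideas through their own settings rather than code like this.

```python
class ScopedTools:
    """Wraps tools so the agent can only call what it has been granted."""

    def __init__(self, tools, allowed, read_only=True):
        self._tools = tools              # name -> (function, writes_data)
        self._allowed = set(allowed)     # scoped permissions
        self._read_only = read_only      # blocks anything that changes data

    def call(self, name, *args):
        if name not in self._allowed:
            raise PermissionError(f"{name} is outside this agent's scope")
        func, writes_data = self._tools[name]
        if writes_data and self._read_only:
            raise PermissionError(f"{name} is blocked in read-only mode")
        return func(*args)


tools = {
    "read_invoice": (lambda invoice_id: f"invoice {invoice_id}: 120.00", False),
    "delete_invoice": (lambda invoice_id: f"deleted {invoice_id}", True),
}

# Trial run: both tools granted, but nothing that writes is allowed yet.
trial = ScopedTools(tools, allowed={"read_invoice", "delete_invoice"})
print(trial.call("read_invoice", "INV-1"))
```

Calling `trial.call("delete_invoice", "INV-1")` raises `PermissionError` until `read_only` is switched off, which is the kind of switch to leave alone until the agent has earned some trust.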

Costs vary widely. Hobbyist agents on open-source frameworks can run for a few pounds a month in API charges. Enterprise platforms like watsonx Orchestrate or Vertex AI Agent Builder are priced per seat or per workflow and can scale into significant figures. The biggest cost driver is usually the number of language model calls per task, so well-designed agents that plan efficiently are cheaper to run.
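A back-of-envelope estimate shows why the number of model calls per task matters so much. Every figure below is a made-up assumption for illustration, including the price per million tokens; check your provider's current pricing before relying on any of it.

```python
def monthly_cost(tasks_per_month, calls_per_task, tokens_per_call,
                 price_per_million_tokens):
    """Estimate monthly API spend from usage and a per-token price."""
    total_tokens = tasks_per_month * calls_per_task * tokens_per_call
    return total_tokens / 1_000_000 * price_per_million_tokens


# Hypothetical workload: 1,000 tasks a month, 2,000 tokens per model call,
# at an assumed price of 2.00 per million tokens.
chatty = monthly_cost(1_000, calls_per_task=12, tokens_per_call=2_000,
                      price_per_million_tokens=2.0)
efficient = monthly_cost(1_000, calls_per_task=6, tokens_per_call=2_000,
                         price_per_million_tokens=2.0)
print(chatty, efficient)
```

Halving the calls per task halves the bill, because cost in this simple model scales linearly with every input.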

A multi-agent system uses several specialised agents that collaborate: a researcher, a writer and a reviewer, for instance. You probably don’t need one for your first project. A single well-scoped agent handles most beginner use cases. Multi-agent designs become useful when a workflow has clearly distinct roles or when one model isn’t strong enough to do everything alone.

Most agents today automate specific tasks within a role, not the whole job. The realistic near-term picture is human-agent collaboration: the agent handles repetitive workflow steps, the human focuses on judgement, relationships and exceptions. Roles heavy in routine knowledge work will change fastest, but redesign rather than wholesale replacement is the dominant pattern so far.