Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

If you’ve used ChatGPT, asked Gemini for a recipe, or watched a Sora video on your timeline, you’ve already met generative AI. But what is generative AI underneath the marketing? It’s a category of artificial intelligence that creates new content (text, images, audio, video, code) by learning patterns from enormous datasets and producing original outputs in response to your prompts.

This guide covers what generative AI is, how it works, the main types, the leading models in 2026, the things you can actually do with it, and the misconceptions worth dropping. No jargon walls. No fluff. Just the working understanding you need.

Key Takeaways

Generative AI is a branch of artificial intelligence that produces original content (text, images, video, audio, software code, and synthetic data) in response to user prompts, rather than classifying or retrieving existing information. It learns probabilistic patterns from massive training datasets, then uses those patterns to generate new outputs that resemble, but do not copy, the data it was trained on.

The clearest way to see the difference: traditional, discriminative AI answers “what is this?” and labels a photo as a cat or flags an email as spam. Generative AI answers “make me one of these”: write the email, draw the cat, compose the music, ship the code.

IBM defines generative AI as “artificial intelligence that can create original content (text, images, video, audio or software code) in response to an instruction or message from a user.” That’s the cleanest one-line definition you’ll find, and it captures the key idea: creation, not retrieval.

The breakthrough that made it all possible was the transformer architecture, introduced in Google’s 2017 paper Attention Is All You Need, according to MIT News (2023). Transformers let models pay attention to context across long passages of text. That’s what unlocked the leap from clunky chatbots to fluent, useful assistants.
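To make “paying attention to context” a little more concrete, here is a minimal sketch of scaled dot-product attention, the core operation described in that paper, in plain Python. It is an illustration under simplifying assumptions, not how any production model is written: real transformers first pass each token through learned projections and run many attention heads in parallel, whereas this toy version uses the raw token vectors as queries, keys, and values. The `tokens` vectors are made up for the example.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    dim = len(keys[0])
    outputs = []
    for q in queries:
        # How relevant is every other token to this one?
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
            for k in keys
        ]
        weights = softmax(scores)
        # Blend the value vectors according to those relevance weights.
        mixed = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(mixed)
    return outputs

# Three toy "token" vectors (hypothetical values, chosen for illustration).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
contextual = attention(tokens, tokens, tokens)
```

Each vector in `contextual` is a weighted blend of all three inputs, with more weight on the tokens most similar to the one being processed. That blending is what lets a model carry context from one part of a passage to another.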

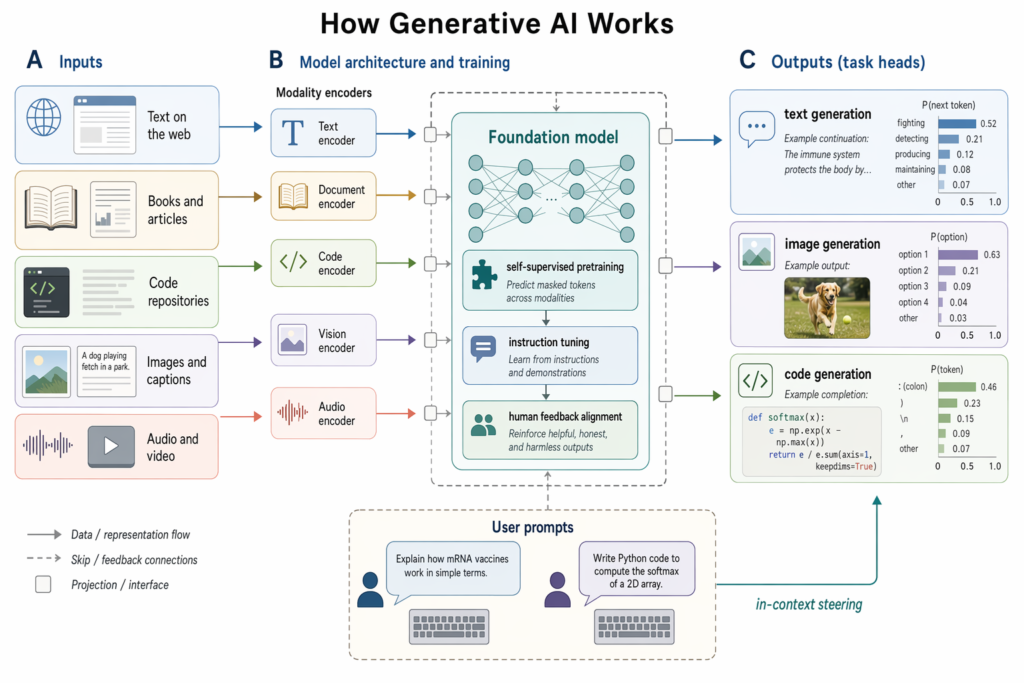

Generative AI works in three phases, according to IBM (2025): training a foundation model on vast amounts of unlabelled data, tuning it to a specific task, and continuously refining it through generation and evaluation. The model learns statistical patterns during training, gets specialised during tuning, and improves with feedback once deployed.

Here’s the loop in plain English:

When you type a prompt, the model isn’t looking up an answer. It’s predicting, token by token, the most probable next piece of output based on everything it has learned. That’s why responses feel fluent, and also why they sometimes confidently invent things. We’ll come back to that.
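A toy version of that token-by-token loop can be sketched in a few lines of Python. This is a deliberately tiny stand-in, assuming a word-pair (bigram) counter in place of a neural network and a one-sentence `corpus` in place of a real training set; the function names `train_bigram` and `generate` are invented for the example.

```python
import random
from collections import Counter, defaultdict

def train_bigram(text):
    """Count which word follows which: a stand-in for 'learning patterns'."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def generate(counts, start, max_tokens=8, seed=0):
    """Extend `start` one token at a time by sampling a probable next word."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    output = [start]
    for _ in range(max_tokens):
        options = counts.get(output[-1])
        if not options:  # nothing ever followed this word in training
            break
        words, weights = zip(*options.items())
        output.append(rng.choices(words, weights=weights, k=1)[0])
    return " ".join(output)

corpus = "the cat sat on the mat and the cat ate the fish"
model = train_bigram(corpus)
print(generate(model, "the"))
```

Notice that the function never checks whether the sentence it produces is true. It only follows word pairs it has seen, weighted by how often they appeared. A large language model is vastly more sophisticated, but the same property is why fluent output and factual output are not the same thing.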

The main types of AI within the generative family are grouped by the kind of output they produce: text, images, audio and music, video, and code. Each uses a different underlying architecture optimised for that modality, though most modern frontier systems are multimodal, handling several at once.

The core architectures you’ll keep hearing about:

Most of the tools you actually use sit on top of these. ChatGPT is an LLM with image and voice extensions. Midjourney is a diffusion model with a chat interface. Sora is a video diffusion model with an LLM front end.

The leading generative AI models in 2026 span a small group of frontier vendors plus a fast-growing open-source ecosystem. OpenAI, Anthropic, Google DeepMind, Meta, and a wave of Chinese labs, notably DeepSeek, currently set the pace. Here’s what’s worth knowing:

The democratisation story matters here. Open-source foundation models such as Meta’s Llama let developers build generative AI applications without bearing the cost of pre-training (IBM, 2025). You can now run a capable model on a laptop, which would have sounded absurd three years ago.

For deeper coverage of any single model, see best generative AI models compared.

You can use generative AI to draft writing, design images, write and review code, summarise long documents, translate content, generate audio and video, and, increasingly, automate multi-step tasks through AI agents. The exact value depends on who you are and what you’re trying to ship.

Here’s a grounded look at real use cases by audience:

These generative AI use cases are not hypothetical. 92% of Fortune 500 companies have already adopted generative AI, including Coca-Cola, Walmart, Apple, and Amazon, according to 2024 industry data from Master of Code. And 53% of C-suite leaders now interact with generative AI tools at work regularly, ahead of mid-level managers at 44% (McKinsey, 2024).

The economic prize behind all this activity is considerable. The McKinsey Global Institute estimates generative AI could add $2.6 to $4.4 trillion in annual economic value across 63 use cases, roughly equivalent to the UK’s entire 2021 GDP.

The biggest misconceptions about generative AI are that it’s a smarter search engine, that its outputs are always accurate, that you need to be a data scientist to use it, that all models are basically the same, and that it creates from nothing. None of these hold up under scrutiny.

Let’s go through them:

Knowing what generative AI isn’t matters as much as knowing what it is. The hype cycle has trained many people to expect either magic or a parlour trick. It’s more useful and more constrained than either camp suggests.

What’s next for generative AI is the shift from generation to action: agentic AI. Generative models produce content; AI agents use that content to plan and execute multi-step tasks autonomously, booking travel, running research, managing inboxes, writing and shipping code. IBM (2025) describes this as the industry’s pivot from standalone generative tools toward systems that do.

The momentum is concrete. Deloitte forecasts that 50% of companies using generative AI will launch agentic AI pilots or proofs of concept by 2027. McKinsey reports AI adoption has more than doubled in five years, with 88% of organisations using AI in at least one business function as of 2025.

A few other shifts worth tracking:

For a practical follow-up on building reliable workflows, see how to use generative AI without hallucinations.

What is the difference between AI and generative AI?

AI is the broad field of machines performing tasks that normally require human intelligence, including classification, prediction, recommendation, and generation. Generative AI is a subset focused specifically on creating new content (text, images, audio, video, code) rather than analysing or sorting existing data. Every generative AI tool is AI; not every AI tool is generative.

Is generative AI the same as ChatGPT?

No. ChatGPT is one product built on OpenAI’s GPT family of generative models. Generative AI is the wider category; it includes ChatGPT, Claude, Gemini, Midjourney, Sora, Stable Diffusion, GitHub Copilot, and many more. ChatGPT is the most famous example, which is why people often use the names interchangeably, but the field is much broader.

Can generative AI replace human jobs?

Generative AI automates specific tasks within jobs more often than entire roles. McKinsey’s research suggests it will reshape work, particularly in writing, customer service, software, and design, but the pattern so far is augmentation: humans direct the AI, review outputs, and handle judgement-heavy work. Roles change; demand shifts toward people who can use these tools effectively.

Is generative AI safe and accurate?

It’s improving, but not flawless. Hallucinations (plausible-sounding but false outputs) remain the top risk, with 51% of organisations reporting inaccuracy issues (McKinsey, 2025). Safety improves with techniques like reinforcement learning from human feedback (RLHF), retrieval-augmented generation (RAG), and grounded prompting, but you should always verify critical facts, sources, and figures before relying on AI output for anything important.

How much does it cost to use generative AI tools?

Consumer tiers are cheap or free. ChatGPT, Claude, and Gemini all offer free plans, with paid tiers usually starting at around $20/month. Open-source models like Llama 4 and Stable Diffusion are free to download and run locally if you have the hardware. Enterprise costs scale with API usage, fine-tuning, and infrastructure. Training a frontier model costs millions of dollars, but using one is now affordable.